Developmental psychologists say that most nine-month-olds are just learning how to roll over and utter familiar sounds.* But nine months in, the SciCheck fact-checking channel is standing upright and — to the delight of its proud parents — loudly challenging the politicians it catches toying with science.

SciCheck is part of FactCheck.org, a 12-year-old journalism project run by the Annenberg Public Policy Center at the University of Pennsylvania. The new science-oriented feature launched in January to expose “false and misleading scientific claims.” With a new Congress and a competitive presidential campaign, there’s been no shortage of material — from the impact of medical marijuana and genomic research to the environmental consequences of volcanoes and barbeques.

The initial funding for SciCheck came in the form of a $102,000 grant from the Stanton Foundation, a philanthropic organization created in the name of longtime CBS president Frank Stanton and his wife. Now, as part of a new $150,000 grant, the foundation will keep SciCheck in business through the upcoming election year.

Annenberg Director Kathleen Hall Jamieson has said she got the idea for SciCheck during the early stages of the 2012 presidential race, after hearing Republican candidate Michele Bachmann make false claims about the HPV vaccine. Four years later, when Republicans Rand Paul and Carly Fiorina suggested that vaccines pose unproven dangers, SciCheck was there to call them out.

The science section’s sole fact-checker is Dave Levitan. He was a freelance writer for nearly ten years after earning a master’s degree in journalism from New York University, where he also received a certificate in science, health and environmental reporting. SciCheck is Levitan’s first job as a staff member. “The biggest transition was just going into an office every day,” he said. “I’d been used to working from home.”

While other big-time national fact-checkers, such as PolitiFact and the Washington Post Fact Checker, occasionally check scientific claims, FactCheck.org has “a dedicated area of a site and a dedicated person” to focus on those topics, Levitan explained. “I don’t cover much besides science.”

SciCheck closely follows the usual FactCheck.org format for challenging a politician’s statement. Unlike the fact-checkers that examine both true and false claims, SciCheck only reviews statements it suspects are false or misleading.

Levitan translates scientific studies and evidence into more accessible terms to confute the politicians — a process that can send their spokespeople and spin doctors toggling from Politico to Scientific American.

Scientific topics can draw in a different kind of reader than a typical political fact-check. “It may be a different, specific group that’s interested in science, but SciCheck really is just part of the site, focused on scientific topics,” Levitan said. “I write a little bit differently than someone writing about jobs or immigration, but that’s just the nature of the topic, not necessarily a focus on the audience.”

Despite SciCheck’s nonpartisan status, a majority of its fact-checks have been about Republican claims. Of the 35 stories published so far, only five focused on Democrats’ false or misleading statements, while 25 concentrated on Republicans’. (The remaining five posts included a fact-check that covered statements from both parties and video recaps of previous stories.)

Levitan’s boss and FactCheck.org’s director, Eugene Kiely, said he is not concerned about the disparity. “Generally speaking, we don’t keep score,” Kiely said. “Our job is to give voters the facts and counter partisan misinformation. We apply the exact same standards of accuracy to claims made by each side, and let the chips fall where they may.”

Levitan attributed the disparity to the Republican presidential candidates’ domination of media coverage. “I think a big part of it is that there are more Republican candidates, so they do a lot of campaign events,” he noted. That means “there are just more opportunities” to get things wrong.

“We are nonpartisan and will cover absolutely anything either party says,” Levitan said. “So if they get science wrong, I’ll cover it.”

Twelve SciCheck stories to date focused specifically on climate change — nine on Republicans’ claims and three on Democrats’. On that issue, Levitan said, “It does seem like there’s more skepticism among politicians than there is even among their constituents.”

The truth is, climate change, like many scientific questions SciCheck covers, is a partisan issue. A 2015 study conducted by the Pew Research Center, for instance, found that 71 percent of Americans who lean Democratic believe global warming is due to human activity, compared to 27 percent of those who lean Republican.

For fact-checkers, separating the science from the politics and putting claims in context is important. “Sometimes what we write about isn’t necessarily that you got a number wrong but is that you’re spinning a given fact to fit your narrative,” Levitan said.

To broaden its audience, FactCheck.org is seeking new distribution outlets for SciCheck’s work, with stories already picked up by Discover Magazine, EcoWatch.org and the Consortium of Social Science Associations. “I expect that we will expand that further during the 2016 presidential campaign, since we typically get more traffic and attention in presidential years,” said FactCheck.org’s Kiely.

Answers in science aren’t always clear cut. But with SciCheck coming of age this election cycle, voters may have a better guide to help them sort science fact from science fiction.

______________________

* We checked.

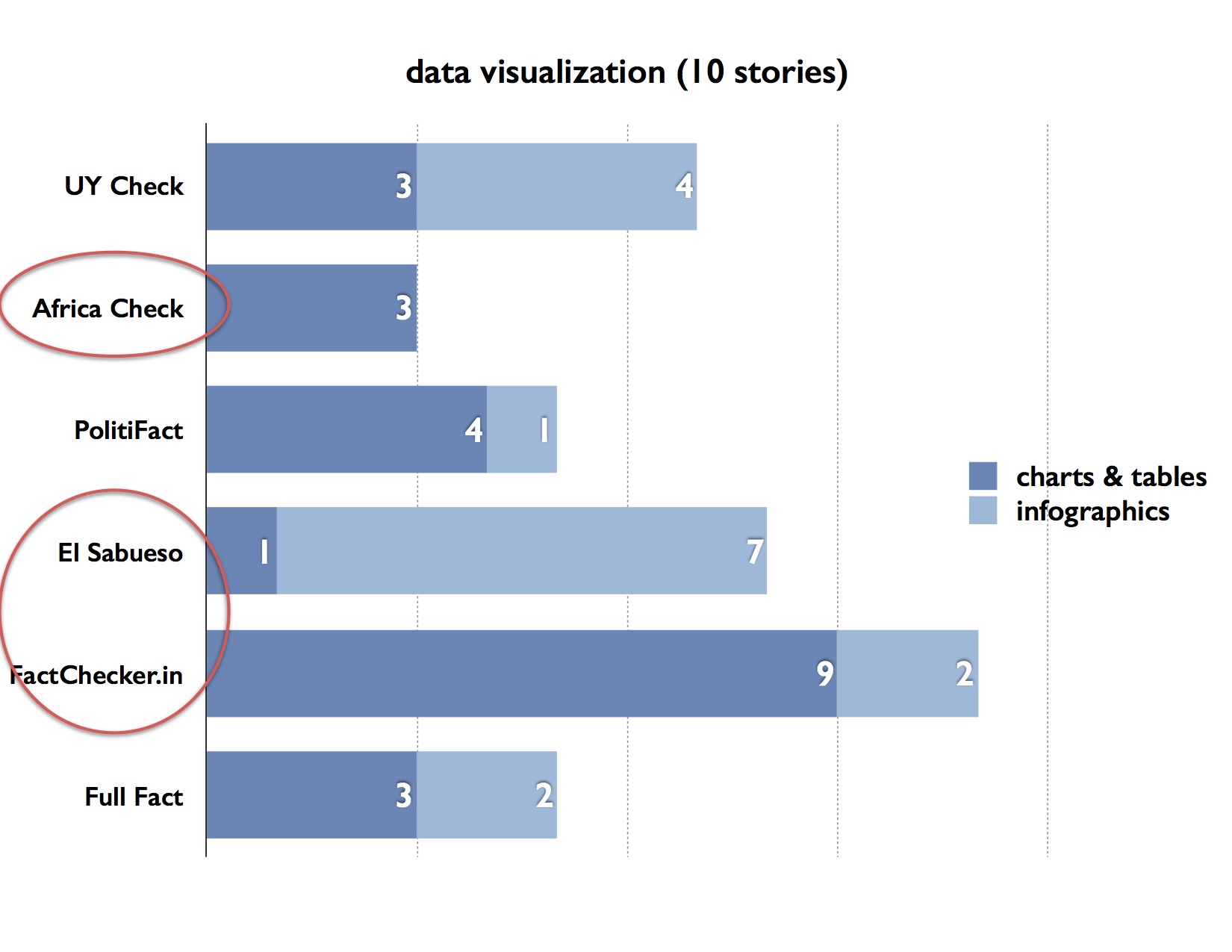

Graves and his researchers found a surprising range in the length of the fact-checking articles. UYCheck from Uruguay had the longest articles, with an average word count of 1,148, followed by Africa Check at 1,009 and PolitiFact at 983.
