Wikipedia editors adopt stronger rules against AI-generated content

By Bill Adair - March 23, 2026

Wikipedia editors have strengthened the site’s rules against AI-generated content.

The new guideline, which was overwhelmingly supported in a discussion involving more than 40 editors, says content generated by large language models cannot be used in new or existing articles. The previous guideline, which many editors had viewed as a placeholder, only prohibited using AI to generate new articles from scratch.

The new guideline also clarifies when it is okay for editors to use LLMs. It says editors can use them “to suggest refinements to their own writing, and to incorporate some of them after human review, provided the LLM doesn’t introduce content of its own.”

Ilyas Lebleu, a Wikipedia editor who proposed the new guideline, said it addresses the growing problems the site is having with humans and AI agents using LLMs to create content.

In early March, a suspected bot named TomWikiAssist authored several articles and edited other pages, according to an account in The Wikipedian, a Substack newsletter.

“An AI agent can just run wild 24 hours per day,” Lebleu said. “It can cause disruption at a scale that is much larger than what a human editor can achieve, even with the help of LLMs.”

Hannah Clover, an editor who was Wikimedian of the Year in 2024, praised the new guideline because it is simple and clear. “LLM text has been really frowned upon for a while, but it’s good to have that officially be the case,” Clover said.

Barkeep49, an influential editor who wrote an early essay predicting Wikipedia’s challenges with AI, also praised the rule.

“It’s important to Wikipedians that our content be written by humans,” said Barkeep49, who, like many editors, prefers not to disclose his real name. “This new guideline, while certainly being more prohibitive than the previous guideline, still recognizes that large language models have utility. My greater hope is that we can get our readers to understand the advantages of a human- rather than AI-written encyclopedia.”

But David Lovett, a veteran editor who writes about Wikipedia in his Edit History Substack, said there’s more work to do. “The less AI-generated content the better. The Internet is already awash with slop. Wikipedia should do everything it can to stay clean. I like the spirit of this rule but I don’t think it’s strict enough.”

2025 census: Fact-checkers persevere as politicians, platforms turn up heat

The number of fact-checking projects has remained roughly consistent in recent years, with a slight decrease so far in 2025

By Erica Ryan - June 19, 2025

By the numbers:

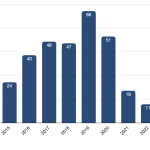

- The Duke Reporters’ Lab counts 443 active fact-checking projects around the world in 2025, down about 2 percent so far from last year. However, the number of projects has remained largely consistent in recent years, hovering around 450.

- The slight decline comes as social media giant Meta, which partners with fact-checkers to issue independent rulings on misinformation, ended that program in the U.S.

- The volume of fact-check articles this year decreased by about 6 percent compared with 2024, based on our tally of ClaimReview, a tagging system used by many fact-checking organizations.

- Fact-checkers are still active in 116 countries and in more than 70 languages. Nearly 80 fact-checking projects operate in countries judged particularly dangerous for journalism by Reporters Without Borders.

- Even in the U.S., where Meta has stopped its partnerships, new fact-checkers continue to spring up. One nationwide effort has signed up three new local newsrooms this year and has plans for four more in the near future.

After years of weeding through the ever-growing tangle of misinformation, journalists and researchers who call out falsehoods were dealt a blow in 2025 that could reshape the landscape of fact-checking around the world.

In January, just before President Donald Trump was inaugurated for a second time, Meta announced an end to its fact-checking program in the U.S., which paid professionals to issue independent rulings on potential misinformation posted on its platforms, including Facebook and Instagram. Fact-checkers around the world feared the move could lead to similar cutbacks elsewhere.

But despite the fallout of the U.S. decision, which has created a difficult environment for raising money from foundations and other big donors, there has yet to be a dramatic decline. In its annual census, the Duke Reporters’ Lab counts 443 active fact-checking projects, down about 2 percent so far from the 451 active at the end of 2024. And new projects on the horizon, including newsrooms slated to join the Gigafact fact-checking effort in the U.S., could nudge the final count upward for the year.

Overall, the net number of fact-checking projects around the globe has remained largely consistent in recent years, even as social media platforms have shifted toward less content moderation in the wake of billionaire Elon Musk acquiring Twitter in 2022.

As happens every year, projects come and go. In 2024, 13 projects got their start and 13 ended, making a net of zero, and in 2023, 17 opened their doors and 19 closed, a decrease of 2.

Still, the boom years seem to be over. The relative stability in the overall number of projects follows many years of massive growth in the industry. When the Reporters’ Lab first started tracking more than a decade ago, each year brought dozens of new fact-checkers, with the total number of projects shooting up from 110 in 2014 to 453 by the end of 2022, an increase of more than 300 percent.

But so far in 2025, fact-checking has seen a slight decline.

In February, the American broadcast group Tegna ended its centralized VERIFY initiative, putting a message on its website directing users to visit their local Tegna stations for VERIFY content. In April, conservative publication The Daily Caller also stopped updating its Check Your Fact initiative.

But another well-known conservative site, The Dispatch, vowed to continue, despite the end to Meta’s fact-checking program in the U.S.

“[We] did not get into the fact-checking business because of Meta’s program, or because we thought it would make us a lot of money. We got into the fact-checking business because we think it’s important,” wrote CEO and editor Steve Hayes. “…We’re not under any illusion that our decision to continue our fact-checking operation is going to save American democracy or the journalism industry. But we’re going to keep doing it because the truth matters, even if some of the loudest and most powerful voices in politics, business, and media are trying to tell you it doesn’t.”

The slight decline in fact-checking so far in 2025 is also reflected in the number of articles being produced. In the first five months of 2024, about 40,500 articles were tagged with ClaimReview, a system to identify fact-checks in search results and apps that is used by many of the world’s fact-checking organizations. During that same period this year, that number was down to about 38,000.
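For readers who want to check the arithmetic behind the percentages quoted above, here is a minimal Python sketch. The helper name `percent_change` is our own illustration and not part of any Reporters’ Lab tool; the inputs are the figures reported in this article.

```python
def percent_change(old: float, new: float) -> float:
    """Return the signed percent change from old to new."""
    return (new - old) / old * 100.0


# Figures quoted in the article.
projects = percent_change(451, 443)        # active projects: end of 2024 vs. so far in 2025
articles = percent_change(40_500, 38_000)  # ClaimReview-tagged articles, first five months

print(f"projects: {projects:.1f}%")  # projects: -1.8%
print(f"articles: {articles:.1f}%")  # articles: -6.2%
```

Rounded, those results match the "about 2 percent" and "about 6 percent" declines described in the summary.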

The future of funding

Meta’s decision to end its third-party fact-checking program in the U.S. (and replace it with a user-driven system like X’s Community Notes) has sparked concerns that the company would do the same thing in the rest of the world.

That would have a devastating impact on fact-checking organizations that have become reliant on the company for financial support. Nearly 160 projects from our count were listed as participating in Meta’s fact-checking program in early 2025 — about a third of the active projects around the world.

The percentage was higher among signatories to the International Fact-Checking Network’s Code of Principles, which holds fact-checkers to standards of nonpartisanship and transparency.

In an IFCN survey released in March, almost two-thirds of respondents reported participating, and on average, those organizations reported receiving about 45 percent of their revenue from Meta last year.

Forging ahead

Overall, our numbers show fact-checkers remain active in 116 countries and more than 70 languages.

While Asia has seen slight declines each year since 2022, the number of projects continued to inch up for longer in Europe, which had an increase from 131 at the end of 2022 to 139 by the end of 2024. The number of projects held almost steady during that time frame in Africa (with 66-67 projects) and South America (with 38-39).

Many fact-checkers are continuing to do their work in hostile environments.

About 80 of the world’s active fact-checking organizations operate in countries that have been judged by Reporters Without Borders’ Press Freedom Index to be particularly dangerous for practicing journalism. Those places include India, Hong Kong, Bangladesh and Egypt, all of which have multiple fact-checking projects. There are also lone fact-checking outposts in countries like Iran, Cuba, Saudi Arabia and Cambodia.

And even though Meta has backed away from funding fact-checking in the U.S., new operations continue to spring up.

Three newsrooms — Maine Trust for Local News, South Dakota News Watch and Suncoast Searchlight in Florida — have all begun participating this year in the Gigafact project, an effort that helps local journalists produce bite-sized “fact briefs” that give yes or no answers about factual claims.

That brings Gigafact’s network to more than a dozen newsrooms across the country — and it expects to add four more local news organizations to its roster in June.

About the Reporters’ Lab and its Census

The Duke Reporters’ Lab began tracking the international fact-checking community in 2014, when director Bill Adair organized a group of about 50 people who gathered in London for what became the first Global Fact meeting. Subsequent Global Facts led to the creation of the International Fact-Checking Network and its Code of Principles.

The Reporters’ Lab and the IFCN use similar criteria to keep track of fact-checkers, but use somewhat different methods and metrics. Here’s how we decide which fact-checkers to include in the Reporters’ Lab database and census reports. If you have questions, updates or additions, please contact Erica Ryan.

Previous Fact-Checking Census Reports

Note: The Reporters’ Lab regularly updates our counts as we identify and add new sites to our fact-checking database. As a result, numbers from earlier census reports differ from year to year.

- April 2014

- January 2015

- February 2016

- February 2017

- February 2018

- June 2019

- June 2020

- June 2021

- June 2022

- June 2023

- June 2024

Locking up misinfo in the Reporters’ Lab vault

New tool quarantines fact-checked social media posts for journalists

By Erica Ryan - December 3, 2024

Fact-checkers around the world now have access to a new tool from the Duke Reporters’ Lab that allows them to save and link to social media posts that have been fact-checked, without boosting traffic to the original creator.

The new feature is part of MediaVault, an archiving platform created by the Reporters’ Lab with support from the Google News Initiative, and it was developed in response to feedback from fact-checkers. In addition to being able to save the social media posts they were fact-checking, journalists said they wanted to be able to link to the content without amplifying it.

Public-facing links are now generated for all social media posts archived in MediaVault, and those links can be used in fact-check articles.

Not only does the tool allow fact-checkers to avoid boosting views for creators of misinformation, it also preserves long-term access to the content. That means there will still be a record of exactly what a post claimed, even if its creator deletes it or the social media platform removes it.

Since the Reporters’ Lab announced the new feature in late October, MediaVault has seen a significant boost in users, with more than 200 new sign-ups.

Archiving social media posts is a problem the fact-checkers at India Today have been struggling with for a long time, says Bal Krishna, who leads the fact-checking team at the news organization. “This is just amazing,” he says of MediaVault.

MediaVault is free for use by fact-checkers, journalists and others working to debunk misinformation shared online, but registration is required.

Reporters’ Lab discontinues FactStream app

Fact-checking app will cease updating at the end of 2024

By Erica Ryan - December 3, 2024

After six years of showcasing the work of U.S. fact-checkers, the FactStream app will cease updating as of December 31, 2024. The Duke Reporters’ Lab has decided to discontinue the iOS app because of a lack of resources to maintain it.

More than 56,000 people have downloaded the free app since its launch in 2018. FactStream aggregated the work of PolitiFact, FactCheck.org and The Washington Post to provide a one-stop source for fact-checking of U.S. political figures and social media posts.

Originally conceived as a platform for real-time fact-checking of live events like political debates, the app was transformed into a daily resource for users at the suggestion of Eugene Kiely of FactCheck.org. The app was built by Reporters’ Lab lead technologist Christopher Guess and the Durham, N.C., design firm Registered Creative.

The FactStream app was one of the many uses of ClaimReview, a tagging system created by the Reporters’ Lab in partnership with Google and the fact-checking community. FactStream users can continue to follow a feed of fact-checks from around the world using ClaimReview by checking out Google’s Fact Check Explorer.

We also encourage FactStream users to follow PolitiFact, FactCheck.org and The Washington Post’s Fact Checker using their own websites and social media feeds.

Researchers mine Fact-Check Insights data to explore many facets of misinfo

The dataset from the Duke Reporters’ Lab has been downloaded hundreds of times

By Erica Ryan - June 20, 2024

Researchers around the world are digging into the trove of data available from our Fact-Check Insights project, with plans to use it for everything from benchmarking the performance of large language models to studying the moderation of humor online.

Since launching in December, the Fact-Check Insights dataset has been downloaded more than 300 times. The dataset is updated daily and made available at no cost to researchers by the Duke Reporters’ Lab, with support from the Google News Initiative.

Fact-Check Insights contains structured data from more than 240,000 claims made by political figures and social media accounts that have been analyzed and rated by independent fact-checkers. The dataset is powered by ClaimReview and MediaReview, twin tagging systems that allow fact-checkers to enter standardized data about their fact-checks, such as the statement being checked, the speaker, the date, and the rating.

Users have found great value in the data.

Marcin Sawiński, a researcher in the Department of Information Systems at the Poznań University of Economics and Business in Poland, is part of a team using ClaimReview data for a multiyear project aimed at developing a tool to assess the credibility of online sources and detect false information using AI.

“With nearly a quarter of a million items reviewed by hundreds of fact-checking organizations worldwide, we gain instant access to a vast portion of the fact-checking output from the past several years,” Sawiński writes. “Manually tracking such a large number of fact-checking websites and performing web data extraction would be prohibitively labor-intensive. The ready-made dataset enables us to conduct comprehensive cross-lingual and cross-regional analyses of fake-news narratives with much less effort.”

The OpenFact project, which is financed by the National Center for Research and Development in Poland, uses natural language processing and machine learning techniques to focus on specific topics.

“Shifting our efforts from direct web data extraction to the cleanup, disambiguation, and harmonization of ClaimReview data has significantly reduced our workload and increased our reach,” Sawiński writes.

Other researchers who have downloaded the dataset plan to use it for benchmarking the performance of large language models for fact-checking uses. Others are investigating the response to false information by social media platforms.

Ariadna Matamoros-Fernández, a senior lecturer in digital media in the School of Communication at Queensland University of Technology in Australia, plans to use the Fact-Check Insights dataset as part of her research into identifying and moderating humor on digital platforms.

“I am using the dataset to find concrete examples of humorous posts that have been fact-checked to discuss these examples in interviews with factcheckers,” Matamoros-Fernández writes. “I am also using the dataset to use examples of posts that have been flagged as being satire, memes, humour, parody etc to test whether different foundation models (GPT4/Gemini) are good at assessing these posts.”

The goals of her research include trying to “better understand the dynamics of harmful humour online” and creating best practices to tackle them. She has received a Discovery Early Career Researcher Award from the Australian Research Council to support her work.

Rafael Aparecido Martins Frade, a doctoral student working with the Spanish fact-checking organization Newtral, plans to utilize the data in his research on using AI to tackle disinformation.

“I am currently researching automated fact-checking, namely multi-modal claim matching,” he writes of his work. “The objective is to develop models and mechanisms to help fight the spread of fake news. Some of the applications we’re planning to work on are euroscepticism, climate emergency and health.”

Researchers who have downloaded the Fact-Check Insights dataset have also provided the Reporters’ Lab with feedback on making the data more usable.

Enrico Zuccolotto, a master’s degree student in artificial intelligence at the Polytechnic University of Milan, performed a thorough review of the dataset, offering suggestions aimed at reducing duplication and filling in missing data.

While the data available from Fact-Check Insights is primarily presented in the original form submitted by fact-checking organizations, the Reporters’ Lab has made small attempts to enhance the data’s clarity, and we will continue to make such adjustments where feasible.

Researchers who have questions about the dataset can refer to the “Guide to the Data” page, which includes a table outlining the fields included, along with examples (see the “What you can expect when you download the data” section). The Fact-Check Insights dataset is available for download in JSON and CSV formats.

Access is free for researchers, journalists, technologists and others in the field, but registration is required.

Related: What exactly is the Fact-Check Insights dataset?

What exactly is the Fact-Check Insights dataset?

Get details about data that can aid your misinformation research

By Erica Ryan - June 20, 2024

Since its launch in December, the Fact-Check Insights dataset has been downloaded hundreds of times by researchers who are studying misinformation and developing technologies to boost fact-checking.

But what should you expect if you want to use the dataset for your work?

First, you will need to register. The Duke Reporters’ Lab, which maintains the dataset with support from the Google News Initiative, generally approves applications within a week. The dataset is intended for academics, researchers, journalists and/or fact-checkers.

Once you are approved, you will be able to download the dataset in either CSV or JSON format.

Those files include the metadata for more than 200,000 fact-checks that have been tagged with ClaimReview and/or MediaReview markup.

The two tagging systems — ClaimReview for text-based claims, MediaReview for images and videos — are used by fact-checking organizations across the globe. ClaimReview summarizes a fact-check, noting the person and claim being checked and a conclusion about its accuracy. MediaReview allows fact-checkers to share their assessment of whether a given image, video, meme or other piece of media has been manipulated.

The Reporters’ Lab collects ClaimReview and MediaReview data when it is submitted by fact-checkers. We filter the data to include only reputable fact-checking organizations that have qualified to be listed in our database, which we have been publishing and updating for a decade. We also work to reduce duplicate entries, and standardize the names of fact-checking organizations. However, for the most part, the data is presented in its original form as submitted by fact-checking organizations.

Here are the fields that you can expect to be included in the dataset, along with examples:

ClaimReview

| CSV Key | Description | Example Value |

|---|---|---|

| id | Unique ID for each ClaimReview entry | 6c4f3a30-2ec1-4e2e-9b57-41ad876223e5 |

| @context | Link to schema.org, the home of ClaimReview | https://schema.org |

| @type | Type of schema being used | ClaimReview |

| claimReviewed | The claim/statement that was assessed by the fact-checker | Marsha Blackburn “voted against the Reauthorization of the Violence Against Women Act, which attempts to protect women from domestic violence, stalking, and date rape.” |

| datePublished | The date the fact-check article was published | 10/9/18 |

| url | The URL of the fact-check article | https://www.politifact.com/truth-o-meter/statements/2018/oct/09/taylor-swift/taylor-swift-marsha-blackburn-voted-against-reauth/ |

| author.@type | Type of author | Organization |

| author.name | The name of the fact-checking organization that submitted the fact-check | PolitiFact |

| author.url | The main URL of the fact-checking organization | http://www.politifact.com |

| itemReviewed.@type | Type of item reviewed | Claim |

| itemReviewed.author.name | The person or group that made the claim that was assessed by the fact-checker | Taylor Swift |

| itemReviewed.author.@type | Type of speaker | Person |

| itemReviewed.author.sameAs | URLs that help establish the identity of the person or group that made the claim, such as a Wikipedia page (rarely used) | https://www.taylorswift.com/ |

| reviewRating.@type | Type of review | Rating |

| reviewRating.ratingValue | An optional numerical value assigned to a fact-checker’s rating. Not standardized. (Notes: 1. The ClaimReview schema specifies the use of an integer for the ratingValue, worstRating and bestRating fields. 2. For organizations that use rating scales (such as PolitiFact), if the rating chosen falls on the scale, the numerical rating will appear in the ratingValue field. 3. If the rating isn’t on the scale (ratings that use custom text, or special categories like Flip Flops), the ratingValue field will be empty, but worstRating and bestRating will still appear. 4. For organizations that don’t use ratings that fall on a numerical scale, all three fields will be blank.) | 8 |

| reviewRating.alternateName | The fact-checker’s conclusion about the accuracy of the claim in text form — either a rating, like “Half True,” or a short summary, like “No evidence” | Mostly True |

| author.image | The logo of the fact-checking organization | https://d10r9aj6omusou.cloudfront.net/factstream-logo-image-61554e34-b525-4723-b7ae-d1860eaa2296.png |

| itemReviewed.name | The location where the claim was made | in an Instagram post |

| itemReviewed.datePublished | The date the claim was made | 10/7/18 |

| itemReviewed.firstAppearance.url | The URL of the first known appearance of the claim | https://www.instagram.com/p/BopoXpYnCes/?hl=en |

| itemReviewed.firstAppearance.type | Type of content being referenced | CreativeWork |

| itemReviewed.author.image | An image of the person or group that made the claim | https://static.politifact.com/CACHE/images/politifact/mugs/taylor_swift_mug/03dfe1b483ec8a57b6fe18297ce7f9fd.jpg |

| reviewRating.ratingExplanation | One to two short sentences providing context and information that led to the fact-checker’s conclusion | Blackburn voted in favor of a Republican alternative that lacked discrimination protections based on sexual orientation and gender identity. But Blackburn did vote no on the final version that became law. |

| itemReviewed.author.jobTitle | A title or description of the person or group that made the claim | Mega pop star |

| reviewRating.bestRating | An optional numerical value representing what rating a fact-checker would assign to the most accurate content it assesses. See note on “reviewRating.ratingValue” field above. | 10 |

| reviewRating.worstRating | An optional numerical value representing what rating a fact-checker would assign to the least accurate content it assesses. See note on “reviewRating.ratingValue” field above. | 0 |

| reviewRating.image | An image representing the fact-checker’s rating, such as the Truth-O-Meter | https://static.politifact.com/politifact/rulings/meter-mostly-true.jpg |

| itemReviewed.appearance.1.url to itemReviewed.appearance.15.url | A URL where the claim appeared. This field has been limited to the first 15 URLs submitted for the stability of the CSV. See the JSON download for complete “appearance” data. | https://www.instagram.com/p/BopoXpYnCes/?hl=en |

| itemReviewed.appearance.1.@type to itemReviewed.appearance.15.@type | Type of content being referenced | CreativeWork |
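As an illustration of how the ClaimReview fields above might be used, here is a minimal Python sketch that reads CSV rows with the standard library and handles the blank rating fields described in the note on “reviewRating.ratingValue”. The helper names, the sample rows and the 0-to-1 scaling are our assumptions for demonstration only; the dataset itself does not standardize ratings, and the second sample row is hypothetical.

```python
import csv
import io


def normalized_rating(row):
    """Scale ratingValue to 0..1 using worstRating/bestRating.

    Returns None when any of the three fields is blank or non-numeric,
    which the field notes say is common. The scaling is an illustrative
    assumption, not something the dataset provides.
    """
    try:
        value = float(row.get("reviewRating.ratingValue") or "")
        worst = float(row.get("reviewRating.worstRating") or "")
        best = float(row.get("reviewRating.bestRating") or "")
    except ValueError:
        return None
    if best == worst:
        return None
    return (value - worst) / (best - worst)


def appearance_urls(row, limit=15):
    """Collect the non-empty itemReviewed.appearance.N.url columns (N = 1..limit)."""
    urls = []
    for i in range(1, limit + 1):
        url = (row.get(f"itemReviewed.appearance.{i}.url") or "").strip()
        if url:
            urls.append(url)
    return urls


# Tiny stand-in for the downloaded CSV; only a few of the columns are shown.
SAMPLE_CSV = (
    "id,author.name,reviewRating.ratingValue,reviewRating.worstRating,"
    "reviewRating.bestRating,reviewRating.alternateName,itemReviewed.appearance.1.url\n"
    "example-1,PolitiFact,8,0,10,Mostly True,https://www.instagram.com/p/BopoXpYnCes/?hl=en\n"
    "example-2,Hypothetical Checker,,,,No evidence,\n"
)

rows = list(csv.DictReader(io.StringIO(SAMPLE_CSV)))
for row in rows:
    print(row["id"], normalized_rating(row), appearance_urls(row))
```

Because the numerical ratings are not standardized across organizations, the text in “reviewRating.alternateName” is often the safer field to rely on; a scaled value like the one above is only comparable within a single organization’s scale.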

MediaReview

| CSV Key | Description | Example Value |

|---|---|---|

| id | Unique ID for each MediaReview entry | 2bfe531d-ff53-40f5-8114-a819db22ca8b |

| @context | Link to schema.org, the home of MediaReview | https://schema.org |

| @type | Type of schema being used | MediaReview |

| datePublished | The date the fact-check article was published | 2020-07-02 |

| mediaAuthenticityCategory | The fact-checker’s conclusion about whether the media was manipulated, ranging from “Original” to “Transformed” (More detail) | Transformed |

| originalMediaContextDescription | A short sentence explaining the original context if media is used out of context | In this case, there was no original context. But this is a text field. |

| originalMediaLink | Link to the original, non-manipulated version of the media (if available) | https://example.com/ |

| url | The URL of the fact-check article that assesses a piece of media | https://www.politifact.com/factchecks/2020/jul/02/facebook-posts/no-taylor-swift-didnt-say-we-should-remove-statue-/ |

| author.@type | Type of author | Organization |

| author.name | The name of the fact-checking organization | PolitiFact |

| author.url | The URL of the fact-checking organization | http://www.politifact.com |

| itemReviewed.contentUrl | The URL of the post containing the media that was fact-checked | https://www.facebook.com/photo.php?fbid=10223714143346243&set=a.3020234149519&type=3&theater |

| itemReviewed.startTime | Timestamp of video edit (in HH:MM:SS format) | 0:01:00 |

| itemReviewed.endTime | Ending timestamp of video edit, if applicable (in HH:MM:SS format) | 0:02:00 |

| itemReviewed.@type | Type of media being reviewed | ImageObject / VideoObject / AudioObject |

Please note that not every fact-check will contain data for every field.
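Because fields can be missing, code that reads the MediaReview data should treat blanks as normal. The following Python sketch, with helper names of our own choosing, converts the “itemReviewed.startTime” and “itemReviewed.endTime” values shown above into seconds and returns nothing when either is absent. It assumes the timestamps have three colon-separated parts, as in the examples in the table.

```python
def timestamp_to_seconds(value):
    """Convert 'H:MM:SS' or 'HH:MM:SS' text to seconds.

    Returns None for blank, missing or malformed values.
    """
    if not value or not value.strip():
        return None
    parts = value.strip().split(":")
    if len(parts) != 3:
        return None
    try:
        hours, minutes, seconds = (int(p) for p in parts)
    except ValueError:
        return None
    return hours * 3600 + minutes * 60 + seconds


def edit_duration(row):
    """Length of the flagged video edit in seconds, or None if either time is missing."""
    start = timestamp_to_seconds(row.get("itemReviewed.startTime"))
    end = timestamp_to_seconds(row.get("itemReviewed.endTime"))
    if start is None or end is None:
        return None
    return end - start


# Example rows using the values from the table; the image row has no timestamps.
video = {"itemReviewed.startTime": "0:01:00", "itemReviewed.endTime": "0:02:00"}
image = {"itemReviewed.@type": "ImageObject"}

print(edit_duration(video))  # 60
print(edit_duration(image))  # None
```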

For the JSON version of the table above, please see the “What you can expect when you download the data” section of the Guide on the Fact-Check Insights website. The Guide page also contains tips for working with the ClaimReview and MediaReview data.

If you continue to have questions about the Fact-Check Insights dataset, please reach out to hello@factcheckinsights.org.

Related: Researchers mine Fact-Check Insights data to explore many facets of misinfo

With half the planet going to the polls in 2024, fact-checking sputters

Referees are hard to come by in a globe-spanning election year that desperately needs more fact-checking

By Mark Stencel, Erica Ryan and - May 30, 2024

This year’s elections are a global convergence of ballots, checkboxes and thumb prints, with voters in more than 64 countries and all of the European Union heading to the polls. Based on a Time magazine estimate, this democratic spectacle could potentially involve the equivalent of 49% of the planet’s population.

Just one problem: There aren’t enough referees.

For most of the past decade and a half, the global fact-checking community served that role, and experienced years of rapid growth. But based on the most recent counts from the Duke Reporters’ Lab, the number of reporting and research teams that routinely intercept political lies and disinformation is plateauing — exactly at the time when the world needs fact-checkers most.

Our latest count showed as many as 457 fact-checking projects in 111 countries were active over the past two years.

But so far in 2024, that number has shrunk to 439.

The runup to June’s sprawling E.U. vote and this fall’s U.S. election campaign may nudge the numbers upward slightly. But a slight upswing is nothing like fact-checking’s rocket-like ascent over the past 15 years.

When PolitiFact won a Pulitzer Prize for its U.S. fact-checking in 2009, the award signaled the potential of this important new form of journalism. At that time, we counted 17 sites that consistently did similar work.

By 2016, the year of the U.K.’s final Brexit referendum and Donald J. Trump’s White House win, the count was 190 — an 11-fold increase.

Four years later, during the first year of the COVID-19 pandemic and Trump’s failed reelection campaign, the revised tally for 2020 more than doubled to 421.

An abrupt shift

In the years since our Lab began producing this annual census in 2014, fact-checking has become an international enterprise — quickly growing the community on six of the seven continents. Until it didn’t.

In Asia, for instance, the numbers of fact-checking operations and bureaus jumped from 94 in 2019 to 124 in 2021 — a 32% increase. And much the same happened in Africa, where 32 fact-checkers in 2019 became 54 in 2021 — up 69%.

But the pace dramatically leveled off over the following years. From 2021 to 2023, the range in Asia’s fact-checking count was between 124 and 130. In Africa, the number hovered around 55.

The leveling off started earlier in other parts of the world. From 2020 to 2023, the range in Europe was between 120 and 135.

The number in the Americas decreased. From 2020 to 2023, South America went from 44 to 39 while North America went from 94 to 90.

The flatter Earth

Our conversations with fact-checkers indicate a few factors contributed to the flattening.

Like every industry and institution, fact-checkers had to manage their teams through the pandemic. And in the early days of COVID-19, they also had to rejigger their organizations to cover the slow-motion disaster. That meant adding health reporting to beats that more typically focused on a mix of politics, hoaxes and digital scams.

Fact-checkers, like other journalists, also have struggled to raise money to pay their operating costs.

Atop those challenges, a certain amount of turnover was common in the fact-checking business. Teams in one place would decide to close up shop, often for financial reasons. At the same time, new fact-checkers would start their own ventures.

For most of the past decade, new fact-checking operations far outnumbered the ones that had shut down, often by wide margins. In one year, 2017, the ratio was 11-to-1, with 55 new fact-checkers and five that closed down.

Then there was last year: 2023 was the first time there were more departing fact-checking teams than new ones — 18 closures to 10 starts.

Roughing the refs

Perhaps the biggest challenge some fact-checking projects face has less to do with the fundamentals of running a newsroom or a research team. It’s about the vitriol that fact-checkers encounter from hostile governments and politicians, as well as their supporters.

From Bangladesh to Brazil, scurrilous attempts to undermine fact-checking are familiar tactics, especially for the reporters and researchers who work in countries where press freedom hardly exists.

Based on data from Reporters Without Borders’ recently released World Press Freedom Index, 69 fact-checking organizations in the Reporters’ Lab list are based in about 20 countries with situations that are rated “very serious.” Starting from the most serious places, those fact-checkers operate in Syria (1), Afghanistan (2), Iran (1), China (5), Myanmar (2), Egypt (4), Iraq (2), Cuba (1), Belarus (1), Saudi Arabia (1), Bangladesh (5), Azerbaijan (1), India (26), Turkey (3), Venezuela (3), Yemen (2), Pakistan (2), Cambodia (1), Sri Lanka (5) and Sudan (1). The list from Reporters Without Borders also counts Palestine, where there are at least three fact-checking teams. Palestine is between Turkey and Venezuela in the Index.
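
As a quick check on the tally above, the per-country counts can be added up in a few lines of Python. The dictionary name is ours, and the numbers are copied from the list in this report (Palestine, which is mentioned separately, is left out).

```python
# Fact-checkers per country, as listed in the text.
very_serious_counts = {
    "Syria": 1, "Afghanistan": 2, "Iran": 1, "China": 5, "Myanmar": 2,
    "Egypt": 4, "Iraq": 2, "Cuba": 1, "Belarus": 1, "Saudi Arabia": 1,
    "Bangladesh": 5, "Azerbaijan": 1, "India": 26, "Turkey": 3,
    "Venezuela": 3, "Yemen": 2, "Pakistan": 2, "Cambodia": 1,
    "Sri Lanka": 5, "Sudan": 1,
}

total = sum(very_serious_counts.values())
countries = len(very_serious_counts)
india_share = very_serious_counts["India"] / total * 100

print(f"{total} fact-checkers in {countries} countries")
print(f"India's share: {india_share:.0f}%")
```

The sum comes to 69 fact-checkers across 20 countries, matching the totals given above.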

Even in less dangerous places, politicians have enlisted companies and institutions to use economic pressure against fact-checkers.

The fact-checking community saw multiple examples in 2023. In South Korea, conservative politicians pressured the country’s leading search engine, Naver, to cancel its financial support for SNU FactCheck — a project of the Seoul National University Institute of Communications Research. Since 2017, SNU FactCheck’s director, EunRyung Chong, worked with her country’s leading media organizations to showcase fact-checking reports using a shared system for rating questionable claims — especially the claims of politicians. Naver not only dropped its financial support, but it also took away SNU FactCheck’s access to the search company’s audience. Ultimately, the European Climate Foundation, a nonpartisan organization based in the Netherlands, stepped in to provide support that allowed SNU FactCheck and its media partners to continue their work — including coverage of last month’s National Assembly election.

In Australia, conservative politicians were more successful in derailing a years-long partnership between the country’s ABC News network and RMIT University. Egged on by the conservative outlets of News Corp Australia, lawmakers attacked the reporting of RMIT ABC Fact Check based on claims of bias and misuse of public funds. ABC has since announced its plans to sever the collaboration with the university and launch a new program aimed at misinformation.

Agence France-Presse is one of the largest fact-checking organizations, with teams posted in dozens of bureaus around the world. The French news agency lists most of the participating journalists, but not all. “While we endeavour to be as transparent as possible about our staff, some countries and environments are more hostile than others when it comes to journalism,” the list notes. “For that reason, some of the journalists on our… team will not be named below to protect their safety.”

[June 6, 2024: This article was updated to better characterize the reasons that led to the end of the RMIT ABC Fact Check partnership after seven years.]

About the Reporters’ Lab and its Census

The Duke Reporters’ Lab began tracking the international fact-checking community in 2014, when director Bill Adair organized a group of about 50 people who gathered in London for what became the first Global Fact meeting. Subsequent Global Facts led to the creation of the International Fact-Checking Network and its Code of Principles.

The Reporters’ Lab and the IFCN use similar criteria to keep track of fact-checkers, but use somewhat different methods and metrics. Here’s how we decide which fact-checkers to include in the Reporters’ Lab database and census reports. If you have questions, updates or additions, please contact Mark Stencel, Erica Ryan or Joel Luther.

Previous Fact-checking Census Reports

Note: The Reporters’ Lab regularly updates our counts as we identify and add new sites to our fact-checking database. As a result, numbers from earlier census reports differ from year to year.

- April 2014

- January 2015

- February 2016

- February 2017

- February 2018

- June 2019

- June 2020

- June 2021

- June 2022

- June 2023

9th Street Journal to use AI to generate local public service journalism

AI to bridge local news gap with launch of The 10th Street Journal

By - May 20, 2024

The 9th Street Journal, a local news publication published by students in the DeWitt Wallace Center for Media & Democracy, has begun using artificial intelligence to fill gaps in local journalism.

Called The 10th Street Journal, the new project uses the power of AI to generate stories from news releases and other reliable sources. As local newsrooms have downsized, service journalism stories — articles about road construction, event announcements and trash pickups — have often been eliminated.

Utilizing AI allows for quicker and more efficient news production. Every day, The 10th Street Journal will publish a few short stories with news from Durham, such as:

- Government actions affecting daily life, such as road closures, airport updates and activities in local parks.

- Announcements of upcoming government board and commission meetings.

- Community events, including festivals and celebrations.

Each story will be reviewed by a human editor for content, accuracy and style before it is published. The stories will carry the 10th Street byline and a disclaimer indicating they were created using AI.

Bill Adair, editor of The 9th Street Journal, and Alison Jones, managing editor, recognize the uncertainties of AI in journalism and acknowledge the need for a human touch.

“But we also believe it can be harnessed to fill a void in local journalism,” they wrote. “We are excited to be on the leading edge of this new effort.”

Duke lab gives fact-checkers, researchers new tools to thwart misinformation

Powerful global databases offer new insights into political claims and hoaxes, and help debunk manipulated images and video

By Duke Reporters' Lab - December 15, 2023

Researchers and journalists covering the battle against misinformation have a powerful new tool in their arsenal — a groundbreaking collection of fact-checking data that covers tens of thousands of harmful political claims and online hoaxes.

Fact-Check Insights, a comprehensive global database from the Duke Reporters’ Lab that launches this week, contains structured data from more than 180,000 claims from political figures and social media accounts that have been analyzed and rated by independent fact-checkers. The project was created with support from the Google News Initiative.

The Fact-Check Insights database is powered by ClaimReview — which has been called the world’s most successful structured journalism project — and its sibling MediaReview. The twin tagging systems allow fact-checkers to enter standardized data about their fact-checks, such as the statement being fact-checked, the speaker, the date, and the rating.
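
For readers unfamiliar with the tagging system, here is a rough Python sketch of what a single ClaimReview record might look like. The property names follow the schema.org ClaimReview vocabulary as we understand it. Every value is a made-up placeholder, and this is not necessarily the layout of the Fact-Check Insights download.

```python
import json

# Illustrative record only: all values below are hypothetical placeholders.
claim_review = {
    "@context": "https://schema.org",
    "@type": "ClaimReview",
    "url": "https://example.org/fact-checks/sample",  # hypothetical URL
    "datePublished": "2023-12-01",                    # date of the fact-check
    "claimReviewed": "A sample statement being fact-checked.",
    "author": {"@type": "Organization", "name": "Example Fact-Checker"},
    "itemReviewed": {
        "@type": "Claim",
        # The speaker who made the claim, and when.
        "author": {"@type": "Person", "name": "Example Speaker"},
        "datePublished": "2023-11-28",
    },
    # The fact-checker's verdict, as a text label.
    "reviewRating": {"@type": "Rating", "alternateName": "False"},
}

print(json.dumps(claim_review, indent=2))
```

The four pieces of information named above (statement, speaker, date and rating) map here to `claimReviewed`, `itemReviewed.author`, the `datePublished` fields and `reviewRating`.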

“ClaimReview and MediaReview are the secret sauce of fact-checking,” said Bill Adair, director of the Reporters’ Lab and the Knight professor of journalism and public policy at Duke. “This data will give researchers a much easier way to study how politicians lie, where false information spreads, and other vital topics so we can better combat misinformation.”

This rich, important dataset is updated daily, summarizing articles from dozens of fact-checkers around the world, including well-known organizations such as FactCheck.org, PesaCheck, Factly, Full Fact, Chequeado and Pagella Politica. It is ready-made for download in JSON and CSV formats. Access is free for researchers, journalists, technologists and others in the field, but registration is required.

Archiving tool for fact-checkers

Along with Fact-Check Insights, the Reporters’ Lab is launching MediaVault, a unique and unprecedented tool for fact-checkers who are working to debunk manipulated images and videos shared around the world.

MediaVault is a cutting-edge system that collects and stores images and videos that have been analyzed by reputable fact-checking organizations. The MediaVault archive allows fact-checkers to maintain a vital portion of their work, which would otherwise disappear when posts are removed from social media platforms. It also enables quicker research and identification of previously published images and videos in misleading social media posts.

“MediaVault is the first archival system that is specifically tailored to the needs of fact-checkers,” Adair said. “Our team developed this system after seeing too many posts go missing after being fact-checked. We realized fact-checkers needed a custom-made solution.”

MediaVault is free for use by fact-checkers, journalists and others working to debunk misinformation shared online, but registration is required. This project also received support from the Google News Initiative.

The Reporters’ Lab team behind the Fact-Check Insights and MediaVault projects includes lead technologist Christopher Guess, project manager Erica Ryan, ClaimReview/MediaReview manager Joel Luther, and Lab co-director Mark Stencel. The team was assisted by Duke University researcher Asa Royal, along with developer Justin Reese and designer Joanna Fonte of Bad Idea Factory.

Misinformation spreads, but fact-checking has leveled off

10th annual fact-checking census from the Reporters' Lab tracks an ongoing slowdown

By Mark Stencel, Erica Ryan and - June 21, 2023

While much of the world’s news media has struggled to find solid footing in the digital age, the number of fact-checking outlets reliably rocketed upward for years — from a mere 11 sites in 2008 to 424 in 2022.

But the long strides of the past decade and a half have slowed to a more trudging pace, despite increasing concerns around the world about the impact of manipulated media, political lies and other forms of dangerous hoaxes and rumors.

In our 10th annual fact-checking census, the Duke Reporters’ Lab counts 417 fact-checkers that are active so far in 2023, verifying and debunking misinformation in more than 100 countries and 69 languages.

While the count of fact-checkers routinely fluctuates, the current number is roughly the same as it was in 2022 and 2021.

In more blunt terms: Fact-checking’s growth seems to have leveled off.

Since 2018, the number of fact-checking sites has grown by 47%. While that’s an increase of 135, it is far slower than the preceding five years, when the numbers grew more than two and a half times, or the six-fold increase over the five years before that.

There also are important regional patterns. With lingering public health issues, climate disasters, and Russia’s ongoing war with Ukraine, factual information is still hard to come by in important corners of the world.

Before 2020, there was a significant growth spurt among fact-checking projects in Africa, Asia, Europe and South America. At the same time, North American fact-checking began to slow. Since then, growth in the fact-checking movement has plateaued in most of the world.

The Long Haul

One good sign for fact-checking is the sustainability and longevity of many key players in the field. Almost half of the fact-checking organizations in the Reporters’ Lab count have been active for five years or more. And roughly 50 of them have been active for 10 years or more.

The average lifespan of an active fact-checking site is less than six years. The average lifespan of the 139 fact-checkers that are now inactive was not quite three years.

But the baby boom has ended. Since 2019, when a bumper crop of 83 fact-checkers went online, the number of new sites each year has steadily declined. The Reporters’ Lab count for 2022 is at 20, plus three additions in 2023 as of this June. That reduced the rate of growth from three years earlier by 72%.
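
The 72% figure is not broken down in the text. One reading that reproduces it, offered here as an assumption, compares the 83 new sites in 2019 with the 2022 count plus the 2023 additions so far:

```python
# Counts quoted in the text.
new_sites_2019 = 83
new_sites_2022 = 20
additions_2023_so_far = 3

# Assumption: the comparison combines 2022 with the 2023 additions to date.
recent = new_sites_2022 + additions_2023_so_far
decline_pct = (new_sites_2019 - recent) / new_sites_2019 * 100

print(f"{recent} recent launches vs. {new_sites_2019} in 2019")
print(f"Decline: {decline_pct:.1f}%")
```

Under that assumption the decline rounds to 72%.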

The number of fact-checkers that closed down in that same period also declined, but not as dramatically. That means the net count of new and departing sites has gone from 66 in 2019 to 11 in 2022, plus one addition so far in 2023.

The Downshift

As was the case for much of the world, the pandemic period certainly contributed to the slower growth. But another reason is the widespread adoption of fact-checking by journalists and other researchers from nonpartisan think tanks and good-government groups in recent years. That has broadened the number of people doing fact-checking but created less need for news organizations dedicated to the unique form of journalism.

With teams working in 108 countries, just over half of the nations represented in the United Nations have at least one organization that already produces fact-checks for digital media, newspapers, TV reports or radio. So in some countries, the audience for fact-checks might be a bit saturated. As of June, there are 71 countries that have more than one fact-checker.

Another reason for the slower pace is that launching new fact-checking projects is challenging, especially in countries with repressive governments, limited press freedom and safety concerns for journalists. In other words, the places where fact-checking is most needed.

The 2023 World Press Freedom Index rates press conditions as “very serious” in 31 countries. And almost half of those countries (15 of 31) do not have any fact-checking sites. They are Bahrain, Djibouti, Eritrea, Honduras, Kuwait, Laos, Nicaragua, North Korea, Oman, Russia, Tajikistan, Turkmenistan, Vietnam and Yemen. The Index also includes Palestine, the status of which is contested.

Remarkably, there are 62 fact-checking services in the 16 other countries on the “very serious” list. And in eight of those countries, there is more than one site. India, which ranks 161 out of 180 in the World Press Freedom Index, is home to half of those 62 sites. The other countries with more than one fact-checking organization are Bangladesh, China, Venezuela, Turkey, Pakistan, Egypt and Myanmar.

In some cases, fact-checkers from those countries must do their work from other parts of the world or hide their identities to protect themselves or their families. That typically means those journalists and researchers must work anonymously or report on a country as expats from somewhere else. Sometimes both.

At least three fact-checking teams from the Middle East take those precautions: Fact-Nameh (“The Book of Facts”), which reports on Iran from Canada; Tech 4 Peace, an Iraq-focused site that also has members who work in Canada; and Syria’s Verify-Sy, whose staff includes people who operate from Turkey and Europe.

Two other examples are elTOQUE DeFacto, a project of a Cuban news website that is legally registered in Poland; and the fact-checkers at the Belarusian Investigative Center, which is based in the Czech Republic.

In other cases, existing fact-checking organizations have also established separate operations in difficult places. The Indian sites BOOM and Fact Crescendo have set up fact-checking services in Bangladesh, while the French news agency Agence France-Presse (AFP) has fact-checkers that report on misinformation from Hong Kong, India and Myanmar, among others.

There still are places where fact-checking is growing, and much of that has to do with organizations that have multiple outlets and bureaus — such as AFP, as noted above. The French international news service has about 50 active sites aimed at audiences in various countries and various languages.

India-based Fact Crescendo launched two new channels in 2022 — one for Thailand and another focused broadly on climate issues. Along with two other outlets the previous year, Fact Crescendo now has a total of eight sites.

The 2022 midterm elections in the United States added six new local fact-checking outlets to our global tally, all at the state level. Three of the new fact-checkers were the Arizona Center for Investigative Reporting, The Nevada Independent and Wisconsin Watch, all of whom used a platform called Gigafact that helped generate quick-hit “Fact Briefs” for their audiences. But the Arizona Center is no longer participating. (For more about the 2022 U.S. elections see “From Fact Deserts to Fact Streams” — a March 2023 report from the Reporters’ Lab.)

About the Reporters’ Lab and Its Census

The Duke Reporters’ Lab began tracking the international fact-checking community in 2014, when director Bill Adair organized a group of about 50 people who gathered in London for what became the first Global Fact meeting. Subsequent Global Facts led to the creation of the International Fact-Checking Network and its Code of Principles.

The Reporters’ Lab and the IFCN use similar criteria to keep track of fact-checkers, but use somewhat different methods and metrics. Here’s how we decide which fact-checkers to include in the Reporters’ Lab database and census reports. If you have questions, updates or additions, please contact Mark Stencel, Erica Ryan or Joel Luther.

* * *

Related links: Previous fact-checking census reports

April 2014: https://reporterslab.org/duke-study-finds-fact-checking-growing-around-the-world/

January 2015: https://reporterslab.org/fact-checking-census-finds-growth-around-world/

February 2016: https://reporterslab.org/global-fact-checking-up-50-percent/

February 2017: https://reporterslab.org/international-fact-checking-gains-ground/

February 2018: https://reporterslab.org/fact-checking-triples-over-four-years/

June 2019: https://reporterslab.org/number-of-fact-checking-outlets-surges-to-188-in-more-than-60-countries/

June 2020: https://reporterslab.org/annual-census-finds-nearly-300-fact-checking-projects-around-the-world/

June 2021: https://reporterslab.org/fact-checking-census-shows-slower-growth/