How long should fact-checks be? How should they attribute their sources — with links or a detailed list? Should they provide a thorough account of a fact-checker’s work or distill it into a short summary?

Those are just a few of the areas explored in a fascinating new study by Lucas Graves, a journalism professor at the University of Wisconsin. He presented a summary of his research last month at the 2015 Global Fact-Checking Summit in London.

The pilot project represents the first in-depth qualitative analysis of global fact-checking. It was funded by the Omidyar Network as part of its grant to the Poynter Institute to create a new fact-checking organization. The study, done in conjunction with the Reporters’ Lab, lays the groundwork for a more extensive analysis of additional sites in the future.

The findings reveal that fact-checking is still a new form of journalism with few established customs or practices. Some fact-checkers write long articles with lots of quotes to back up their work. Others distill their findings into short articles without any quotes. Graves did not take a position on which approach is best, but his research gives fact-checkers some valuable data to begin discussions about how to improve their journalism.

Graves and three research assistants examined 10 fact-checking articles from each of six different sites: Africa Check, Full Fact in the United Kingdom, FactChecker.in in India, PolitiFact in the United States, El Sabueso in Mexico and UYCheck in Uruguay. The sites were chosen to reflect a wide range of global fact-checking, as this table shows:

Graves and his researchers found a surprising range in the length of the fact-checking articles. UYCheck from Uruguay had the longest articles, with an average word count of 1,148, followed by Africa Check at 1,009 and PolitiFact at 983.

The shortest were from Full Fact, which averaged just 354 words. They reflected a very different approach by the British team. Rather than laying out the factual claims and backing them up with extensive quotes, as most other sites do, Full Fact distills them into summaries.

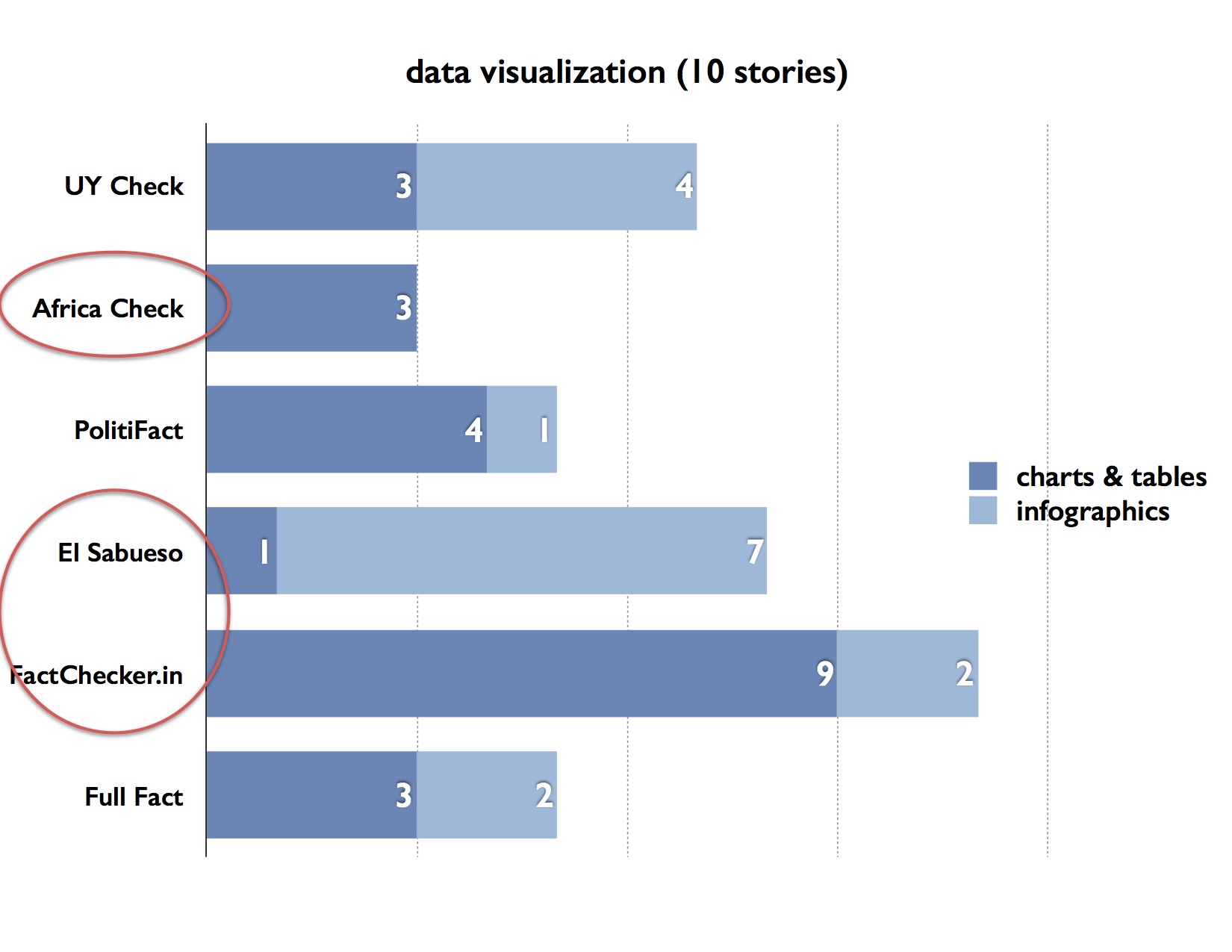

Graves also found a wide range in the use of data visualization in the articles sampled for each site. For example, Africa Check had three data visualizations in its 10 articles, while the Indian site FactChecker.in had 11.

The Latin American sites UYCheck and El Sabueso used the most infographics, while the other sites relied more on charts and tables.

Graves also found a wide range in the use of web links and quotes. Africa Check averaged the highest total of web links and quotes per story (18), followed by 12 for PolitiFact, while UYCheck and El Sabueso had the fewest (8 and 5, respectively). Full Fact had no quotes in the 10 articles Graves examined but used an average of 9 links per article.

Graves and his researchers also examined how fact-checkers use links and quotes — whether they were used to provide political context about the claim being checked, to explain the subject being analyzed or to provide evidence about whether the claim was accurate. They found some sites, such as Africa Check and PolitiFact, used links more to provide context for the claim, while UYCheck and El Sabueso used them more for evidence in supporting a conclusion.

The analysis of quotes yielded some interesting results. PolitiFact used the most in the 10 articles — 38 quotes — with its largest share from evidentiary uses. Full Fact used the fewest (zero), followed by UYCheck (23) and El Sabueso (26).

The study also examined what Graves called “synthetic” sources: the different authoritative sources used to explain an issue and decide the accuracy of a claim. This part of the analysis distilled a final list of institutional sources for each fact-check, regardless of whether sources were directly quoted or linked to. Africa Check led the list with almost nine different authoritative sources considered on average, more than twice as many as FactChecker.in and UYCheck. Full Fact, UYCheck, and El Sabueso relied mainly on government agencies and data, while PolitiFact and Africa Check drew heavily on NGOs and academic experts in addition to official data.

The study raises some important questions for fact-checkers to discuss. Are we writing our fact-checks too long? Too short?

Are we using enough data visualizations to help readers? Should we take the time to create more infographics instead of simple charts and tables?

What do we need to do to give our fact-checks authority? Are links sufficient? Or should we also include quotes from experts?

Over the next few months, we’ll have plenty to discuss.
