Category: Fact-Checking News

Fact-checkers extend their global reach with 391 outlets, but growth has slowed

Efforts to intercept misinformation are expanding in more than 100 countries, but the pace of new fact-checking projects continues to slow.

By Mark Stencel, Erica Ryan and - June 17, 2022

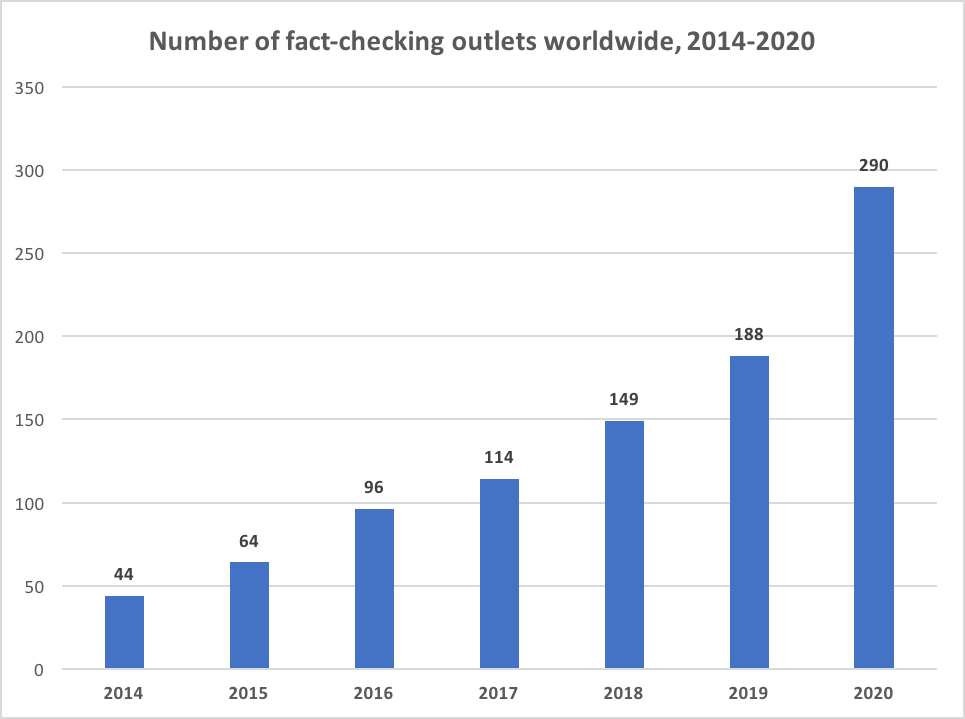

The number of fact-checkers around the world doubled over the past six years, with nearly 400 teams of journalists and researchers taking on political lies, hoaxes and other forms of misinformation in 105 countries.

The Duke Reporters’ Lab annual fact-checking census counted 391 fact-checking projects that were active in 2021. Of those, 378 are operating now.

That’s up from a revised count of 186 active sites in 2016, the year when the Brexit vote and the U.S. presidential election elevated global concerns about the spread of inaccurate information and rumors, especially in digital media. Misleading posts about ethnic conflicts, wars, the climate and the pandemic have only amplified those worries in the years since.

Since last year’s census, we have added 51 sites to our global fact-checking map and database. Over that same 12-month period, seven fact-checkers closed down.

While this vital journalism now appears in at least 69 languages on six continents, the pace of growth in the international fact-checking community has slowed over the past several years.

Growth peaked in 2019, when 77 new fact-checking sites and organizations made their debut. Based on our updated counts, the number of launches fell to 58 in 2020 and 22 last year.

New Fact-Checkers by Year

(Note: The adjusted number of 2021 launches may increase over time as the Reporters’ Lab identifies other fact-checkers we have not yet discovered.)

These numbers may signal a worrisome trend, or they could mean that the growth of the past several years has saturated the market or paused in the wake of the global pandemic. But we also expect our count for last year to increase eventually as we continue to identify other fact-checkers, as happens every year.

More than a third of the growth since 2019’s bumper crop came from existing fact-checking operations that added new outlets to expand their reach to new places and different audiences. That includes Agence France-Presse, the French international news service, which launched at least 17 new sites in that period. In Africa, Dubawa and PesaCheck opened nine new bureaus, while Asia’s Boom and Fact Crescendo opened five. In addition, Delfi and Pagella Politica in Europe and PolitiFact in North America each launched a new satellite.

Fact-checking expanded quickly in Latin America in earlier years, but less so of late. Since 2019, we have seen three launches in South America (one of which has since folded), plus one more focused on Cuba.

Active Fact-Checkers by Year

The Reporters’ Lab is monitoring another trend: fact-checkers’ use of rating systems. These ratings are designed to succinctly summarize a fact-checker’s conclusions about political statements and other forms of potential misinformation. When we analyzed the use of these features in past reports, we found that about 80-90% of the fact-checkers we looked at relied on these meters and standardized labels to prominently convey their findings.

But that approach appears to be less common among newer fact-checkers. Our initial review of the fact-checkers that launched in 2020 found that fewer than half seemed to use rating systems. And among the Class of 2021, only a third seemed to rely on predefined ratings.

We also have seen established fact-checkers change their approach in handling ratings.

The Norwegian fact-checking site Faktisk, for instance, launched in 2017 with a five-point, color-coded rating system similar to those used by most of the fact-checkers we monitor, ranging from “helt sant” (“absolutely true,” in green) to “helt feil” (“completely false,” in red). But during a recent redesign, Faktisk phased out its ratings.

“The decision to move away from the traditional scale was hard and subject to a very long discussion and consideration within the team,” said editor-in-chief Kristoffer Egeberg in an email. “Many felt that a rigid system where conclusions had to ‘fit the glove’ became kind of a straitjacket, causing us to either drop claims that weren’t precise enough or too complex to fit into one fixed conclusion, or to instead of doing the fact-check – simply write a fact-story instead, where a rating was not needed.”

Egeberg also noted that sometimes “the color of the ratings became the main focus rather than the claim and conclusion itself, derailing the important discussion about the facts.”

We plan to examine this trend further and expect it to come up in conversations at the annual Global Fact summit in Oslo, Norway, next week.

The Duke Reporters’ Lab began keeping track of the international fact-checking community in 2014, when it organized a group of about 50 people who gathered in London for what became the first Global Fact meeting. This year roughly 10 times that many people (about 500 journalists, technologists, truth advocates and academics) are expected to attend the ninth summit. The conferences are now organized by the International Fact-Checking Network, based at the Poynter Institute in St. Petersburg, Florida. This will be the group’s first large in-person meeting in three years.

Fact-Checkers by Continent

Like their audiences, the fact-checkers are a multilingual community, and many of these sites publish their findings in multiple languages, either on the same site or, in some cases, on separate sites. English is the most common language, used on at least 166 sites, followed by Spanish (55), French (36), Arabic (14), Portuguese (13), Korean (13), German (12) and Hindi (11).

About three-fifths of the fact-checkers are affiliated with media organizations (226 out of 378, or about 60%). But there are other affiliations and business models too, including 24 with academic ties and 45 that are part of a larger nonprofit or non-governmental organization. Some of these fact-checkers have overlapping arrangements with multiple organizations. More than a fifth of the community (86 out of 378) operates independently.

About the census:

Here’s how we decide which fact-checkers to include in the Reporters’ Lab database. The Lab continually collects new information about the fact-checkers it identifies, such as when they launched and how long they last. That’s why the updated numbers for earlier years in this report are higher than the counts the Lab included in earlier reports. If you have questions, updates or additions, please contact Mark Stencel, Erica Ryan or Joel Luther.

Related links: Previous fact-checking census reports

MediaReview: A next step in solving the misinformation crisis

An update on what we’ve learned from 1,156 entries of MediaReview, our latest collaboration to combat misinformation.

By - June 2, 2022

When a 2019 video went viral after being edited to make House Speaker Nancy Pelosi look inebriated, it took 32 hours for one of Facebook’s independent fact-checking partners to rate the clip false. By then, the video had amassed 2.2 million views, 45,000 shares, and 23,000 comments – many of them calling her “drunk” or “a babbling mess.”

The year before, the Trump White House circulated a video that was edited to make CNN’s Jim Acosta appear to aggressively react to a mic-wielding intern during a presidential press conference.

A string of high-profile misleading videos like these in the run-up to the 2020 U.S. election stoked long-standing fears about skillfully manipulated videos, some made with AI. The main worry then was how quickly these doctored videos would become the next battleground in a global war against misinformation. But new research by the Duke Reporters’ Lab and a group of participating fact-checking organizations in 22 countries found that other, far less sophisticated forms of media manipulation were much more prevalent.

By using a unified tagging system called MediaReview, the Reporters’ Lab and 43 fact-checking partners collected and categorized more than 1,000 fact-checks based on manipulated media content. Those accumulated fact-checks revealed that:

- While we began this process in 2019 expecting deepfakes and other sophisticated media manipulation tactics to be the most imminent threat, we’ve predominantly seen low-budget “cheap fakes.” Most media-based misinformation is rated “Missing Context,” or, as we’ve defined it, “presenting unaltered media in an inaccurate manner.” In total, fact-checkers have applied the Missing Context rating to 56% of the MediaReview entries they’ve created.

- Most of the fact-checks in our dataset, 78%, come from content on Meta’s platforms Facebook and Instagram, likely driven by the company’s well-funded Third-Party Fact-Checking Program. These platforms are also more likely to label or remove fact-checked content: more than 80% of fact-checked posts on Instagram and Facebook are either labeled to add context or no longer on the platform. In contrast, more than 60% of fact-checked posts on YouTube and Twitter remain intact, with no label indicating their accuracy.

- Without reliable tools for archiving manipulated material that is removed or deleted, it is challenging for fact-checkers to track trends and bad actors. Fact-checkers used a variety of tools, such as the Internet Archive’s Wayback Machine, to attempt to capture this ephemeral misinformation; but only 67% of submitted archive links were viewable on the chosen archive when accessed at a later date, while 33% were not.

The Reporters’ Lab research also demonstrated MediaReview’s potential — especially based on the willingness and enthusiastic participation of the fact-checking community. With the right incentives for participating fact-checkers, MediaReview provides efficient new ways to help intercept manipulated media content — in large part because so many variations of the same claims appear repeatedly around the world, as the pandemic has continuously demonstrated.

The Reporters’ Lab began developing the MediaReview tagging system around the time of the Pelosi video, when Google and Facebook separately asked the Duke team to explore possible tools to fight the looming media misinformation crisis.

MediaReview is a sibling to ClaimReview, an initiative the Reporters’ Lab began leading in 2015 to create infrastructure that lets fact-checkers make their articles machine-readable and easy for search engines, mobile apps and other projects to use. Called “one of the most successful ‘structured journalism’ projects ever launched,” the ClaimReview schema has proven immensely valuable. Adopted by 177 fact-checking organizations around the world, ClaimReview has been used to tag 136,744 articles, establishing a large and valuable corpus of fact-checks: tens of thousands of statements from politicians and social media accounts around the world analyzed and rated by independent journalists.
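To make “machine-readable” concrete, here is a minimal Python sketch that builds a ClaimReview record as JSON-LD, the form in which such markup is commonly embedded in a fact-check page. The property names (`claimReviewed`, `reviewRating`, `alternateName`, `author`, `datePublished`) come from the public schema.org vocabulary; the function name, organization, URL, claim and rating below are hypothetical placeholders, and any real markup should be validated against the current schema.org and search-engine guidelines.

```python
import json


def build_claim_review(url, claim, rating_label, org_name, date_published):
    """Return a ClaimReview object as a JSON-LD-ready dict.

    Hypothetical helper; the values passed in the example are placeholders.
    """
    return {
        "@context": "https://schema.org",
        "@type": "ClaimReview",
        "url": url,                      # address of the fact-check article
        "datePublished": date_published,
        "claimReviewed": claim,          # the statement that was checked
        "author": {"@type": "Organization", "name": org_name},
        "reviewRating": {
            "@type": "Rating",
            # alternateName carries the human-readable verdict
            "alternateName": rating_label,
        },
    }


record = build_claim_review(
    url="https://example.org/fact-check/sample-claim",
    claim="A sample claim circulating on social media.",
    rating_label="False",
    org_name="Example Fact Check",
    date_published="2022-06-02",
)

# Serialize for a <script type="application/ld+json"> tag in the article page.
print(json.dumps(record, indent=2))
```

Because every fact-check uses the same field names, a search engine or researcher can pull verdicts from thousands of sites without scraping each one’s layout, which is what makes a corpus like the one described above possible.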

But ClaimReview proved insufficient to address the new, specific challenges presented by misinformation spread through multimedia. Thus, in September 2019, the Duke Reporters’ Lab began working with the major search engines, social media services, fact-checkers and other interested stakeholders on an open process to develop MediaReview, a new sibling of ClaimReview that creates a standard for manipulated video and images. Throughout pre-launch testing phases, 43 fact-checking outlets have used MediaReview to tag 1,156 images and videos, again providing valuable, structured information about whether pieces of content are legitimate and how they may have been manipulated.

In an age of misinformation, MediaReview, like ClaimReview before it, offers something vital: real-time data on which pieces of media are truthful and which ones are not, as verified by the world’s fact-checking journalists.

But the work of MediaReview is not done. New fact-checkers must be brought on board in order to reflect the diversity and global reach of the fact-checking community, the major search and social media services must incentivize the creation and proper use of MediaReview, and more of those tech platforms and other researchers need to learn about, and make full use of, the opportunities this new tagging system can provide.

An Open Process

MediaReview is the product of a two-year international effort to get input from the fact-checking community and other stakeholders. It was first adapted from a guide to manipulated video published by The Washington Post, which was initially presented at a Duke Tech & Check meeting in the spring of 2019. The Reporters’ Lab worked with Facebook, Google, YouTube, Schema.org, the International Fact-Checking Network, and The Washington Post to expand this guide to include a similar taxonomy for manipulated images.

The global fact-checking community has been intimately involved in the process of developing MediaReview. Since the beginning of the process, the Reporters’ Lab has shared all working drafts with fact-checkers and has solicited feedback and comments at every step. We and our partners have also presented to the fact-checking community several times, including at the Trusted Media Summit in 2019, a fact-checkers’ community meeting in 2020, Global Fact 7 in 2020, Global Fact 8 in 2021 and several open “office hours” sessions with the sole intent of gathering feedback.

Throughout development and testing, the Reporters’ Lab held extensive technical discussions with Schema.org to properly validate the proposed structure and terminology of MediaReview, and solicited additional feedback from third-party organizations working in similar spaces, including the Partnership on AI, Witness, Meedan and Storyful.

Analysis of the First 1,156

As of February 1, 2022, fact-checkers from 43 outlets spanning 22 countries have now made 1,156 MediaReview entries.

Number of outlets creating MediaReview by country.

Number of MediaReview entries created by outlet.

Our biggest lesson in reviewing these entries: the most common form of multimedia misinformation is not what we expected. We began this process in 2019 expecting deepfakes and other sophisticated media manipulation tactics to be an imminent threat, but we’ve predominantly seen low-budget “cheap fakes.” What we’ve seen consistently throughout testing is that most media-based misinformation is rated “Missing Context,” or, as we’ve defined it, “presenting unaltered media in an inaccurate manner.” In total, fact-checkers have applied the Missing Context rating to 56% of the MediaReview entries they’ve created.

The “Original” rating has been the second most applied, accounting for 20% of the MediaReview entries created. As we’ve heard from fact-checkers through our open feedback process, a substantial portion of the media being fact-checked is not manipulated at all; rather, it consists of original videos of people making false claims. Going forward, we know we need to be clear about the use of the “Original” rating as we help more fact-checkers get started with MediaReview, and we need to continue to emphasize the use of ClaimReview to counter the false claims contained in these kinds of videos.
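Figures like “56% Missing Context” and “20% Original” are shares of the total number of entries. A minimal Python sketch of that tally, assuming each entry has already been reduced to its rating label; the five sample labels are invented for illustration and are not drawn from the MediaReview dataset.

```python
from collections import Counter


def rating_shares(ratings):
    """Return {rating: percent of all entries}, most common first, rounded to 0.1."""
    counts = Counter(ratings)
    total = sum(counts.values())
    return {label: round(100 * n / total, 1) for label, n in counts.most_common()}


# Hypothetical sample; the real dataset has 1,156 entries.
sample = ["Missing Context", "Missing Context", "Original",
          "Missing Context", "Transformed"]
print(rating_shares(sample))
# {'Missing Context': 60.0, 'Original': 20.0, 'Transformed': 20.0}
```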

Throughout the testing process, the Duke Reporters’ Lab has monitored incoming MediaReview entries and provided feedback to fact-checkers where applicable. We’ve heard from fact-checkers that that feedback was valuable and helped clarify the rating system.

Our review of the media links checked by third-party fact-checkers shows that, so far, the vast majority of fact-checked media comes from Facebook:

Share of links in the MediaReview dataset by platform.

Facebook’s well-funded Third-Party Fact-Checking Program likely contributes to this rate: fact-checkers are paid directly to check content on Facebook’s platforms, making that content more prevalent in our dataset.

We also reviewed the current status of the links that fact-checkers checked and tagged with MediaReview. Because platforms handle misinformation differently, some of the original posts are intact, others have been removed by the platform or the user, and some carry a context label with additional fact-check information. Instagram is the most likely platform to add such information, while YouTube is the most likely to leave fact-checked content in its original, intact form, with no fact-checking annotation: 72.5% of the media checked from YouTube are still available in their original format on the platform.

Status of fact-checked media broken down by platform, showing the percentage of checked media either labeled with additional context, removed, or presented fully intact.
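A per-platform breakdown like the one in this chart is a grouped tally: for each platform, count how many checked links are labeled, removed or intact, then divide by that platform’s total. A minimal Python sketch, assuming each link has already been classified by hand into one of those three statuses; the status names and the records are hypothetical.

```python
from collections import Counter, defaultdict

# Assumed status categories, matching the three shown in the chart.
STATUSES = ("labeled", "removed", "intact")


def status_by_platform(records):
    """records: iterable of (platform, status) pairs.

    Returns {platform: {status: percent}} with every status in STATUSES present.
    """
    counts = defaultdict(Counter)
    for platform, status in records:
        if status not in STATUSES:
            raise ValueError(f"unknown status: {status!r}")
        counts[platform][status] += 1
    result = {}
    for platform, c in counts.items():
        total = sum(c.values())
        result[platform] = {s: round(100 * c[s] / total, 1) for s in STATUSES}
    return result


# Hypothetical records, not the actual MediaReview links.
links = [
    ("YouTube", "intact"), ("YouTube", "intact"), ("YouTube", "removed"),
    ("Instagram", "labeled"), ("Instagram", "labeled"),
]
for platform, shares in status_by_platform(links).items():
    print(platform, shares)
```

Computing shares within each platform, rather than across the whole dataset, keeps a heavily represented platform such as Facebook from drowning out the pattern on smaller ones.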

In addition, we noticed that fact-checkers often (roughly 25% of the time) entered an archive link in the “Media URL” field, trying to preserve the video or image, since this kind of ephemeral misinformation is often quickly deleted by the platforms or the users. These existing archive systems are unreliable, though: only 67% of the submitted archive links still displayed the media, while 33% did not. Perma.cc was the most reliable archiving system fact-checkers used, but even it successfully displayed only 80% of the checked media, and because it is a paid tool, there is an opportunity to build a new system to preserve fact-checked media.

Success rate of archival tools used by fact-checkers in properly displaying the fact-checked media.
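The archive analysis involves two steps: recognizing which “Media URL” values point to an archive rather than to the original post, and recording whether each archived copy still displays the media. The Python sketch below handles the first step with the standard library’s `urllib.parse` and then computes a success rate per tool from viewability results recorded by a person. The hostname-to-tool mapping covers only the two services named in this report and is an assumption; the sample checks are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Assumed mapping from archive hostnames to tool names; extend as needed.
ARCHIVE_HOSTS = {
    "web.archive.org": "Wayback Machine",
    "perma.cc": "Perma.cc",
}


def archive_tool(url):
    """Return the archive tool for a URL, or None if it is not an archive link."""
    host = (urlparse(url).hostname or "").lower()
    return ARCHIVE_HOSTS.get(host)


def success_rates(checks):
    """checks: iterable of (media_url, viewable) pairs; viewable is a bool
    recorded by someone who opened the archived copy.

    Returns {tool: percent viewable}, skipping non-archive URLs.
    """
    seen = defaultdict(lambda: [0, 0])  # tool -> [viewable count, total count]
    for url, viewable in checks:
        tool = archive_tool(url)
        if tool is None:
            continue
        seen[tool][0] += int(viewable)
        seen[tool][1] += 1
    return {tool: round(100 * ok / total, 1) for tool, (ok, total) in seen.items()}


# Hypothetical checks, not the Reporters' Lab's data.
checks = [
    ("https://web.archive.org/web/2021/https://example.com/video", True),
    ("https://web.archive.org/web/2021/https://example.com/photo", False),
    ("https://perma.cc/ABCD-1234", True),
    ("https://www.facebook.com/example/posts/1", False),  # not an archive link
]
print(success_rates(checks))
```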

Next Steps

Putting MediaReview to use: Fact-checkers have emphasized to us that social media companies and search engines need to make use of these new signals. They’ve also suggested that usability testing would help ensure that MediaReview data is displayed prominently on the tech platforms.

Archiving the images and videos: As noted above, current archiving systems are insufficient to capture the media misinformation fact-checkers are reporting on. Currently, fact-checkers using MediaReview are limited to quoting or describing the video or image they checked and including the URL where they discovered it. There’s no easy, consistent workflow for preserving the content itself. Manipulated images and videos are often removed by social media platforms or deleted or altered by their owners, leaving no record of how they were manipulated or presented out of context. In addition, if the same video or image emerges again in the future, it can be difficult to determine if it has been previously fact-checked. A repository of this content — which could be saved automatically as part of each MediaReview submission — would allow for accessibility and long-term durability for archiving, research, and more rapid detection of misleading images and video.
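One way such a repository could answer “has this been checked before?” is to index each saved file by a cryptographic hash of its bytes. The Python sketch below is hypothetical (the class and method names are ours, not part of MediaReview) and catches only byte-identical copies: a re-encoded, resized or cropped version of the same video or image produces a different hash, so a real system would likely need perceptual hashing or other similarity matching on top of this.

```python
import hashlib


class MediaArchive:
    """Hypothetical in-memory store mapping media fingerprints to fact-checks."""

    def __init__(self):
        self._by_hash = {}

    @staticmethod
    def fingerprint(data: bytes) -> str:
        # SHA-256 of the raw file bytes; identical files give identical digests.
        return hashlib.sha256(data).hexdigest()

    def save(self, data: bytes, fact_check_url: str) -> str:
        """Store a copy of the media alongside the fact-check that reviewed it."""
        digest = self.fingerprint(data)
        entry = self._by_hash.setdefault(digest, {"data": data, "fact_checks": []})
        if fact_check_url not in entry["fact_checks"]:
            entry["fact_checks"].append(fact_check_url)
        return digest

    def previous_checks(self, data: bytes) -> list:
        """Return fact-check URLs already linked to byte-identical media."""
        entry = self._by_hash.get(self.fingerprint(data))
        return list(entry["fact_checks"]) if entry else []


archive = MediaArchive()
video = b"...raw video bytes..."  # placeholder content
archive.save(video, "https://example.org/fact-check/1")
print(archive.previous_checks(video))         # ['https://example.org/fact-check/1']
print(archive.previous_checks(video + b"x"))  # [] because any byte change alters the hash
```

Storing the bytes themselves, not just the URL, is what would let the record survive after a platform or user deletes the original post.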

Making more: We continue to believe that fact-checkers need incentives to keep producing this data. The more fact-checkers use these schemas, the better we understand the patterns and spread of misinformation around the world, and the better we can intercept inaccurate and sometimes dangerous content. The effort required to produce ClaimReview or MediaReview for a single fact-check is relatively low, but it adds up, especially for smaller teams with limited technological resources.

While fact-checkers created the first 1,156 entries solely to help the community refine and test the schema, their continued use of it will depend on the tech platforms’ willingness to adopt and use the data. Currently, 31% of the links in our MediaReview dataset are still fully intact where they were first posted: they have not been removed or had any additional context added. Fact-checkers have shown their eagerness to research manipulated media, publish detailed articles assessing its veracity, and make their assessments available to the platforms to help stem the tide of misinformation. Search engines and social media companies must now decide to use and display these signals.

Appendix: MediaReview Development Timeline

MediaReview is the product of a two-year international effort involving the Duke Reporters’ Lab, the fact-checking community, the tech platforms and other stakeholders.

Mar 28, 2019

Phoebe Connelly and Nadine Ajaka of The Washington Post first presented their idea for a taxonomy classifying manipulated video at a Duke Tech & Check meeting.

Sep 17, 2019

The Reporters’ Lab met in New York with Facebook, Google, YouTube, Schema.org, the International Fact-Checking Network and The Washington Post to plan an expansion of this guide that would include a similar taxonomy for manipulated images.

Oct 17, 2019

The Reporters’ Lab emailed a first draft of the new taxonomy to all signatories of the IFCN’s Code of Principles and asked for comments.

Nov 26, 2019

After incorporating suggestions from the first draft document and generating a proposal for Schema.org, we began to test MediaReview for a selection of fact-checks of images and videos. Our internal testing helped refine the draft of the Schema proposal, and we shared an updated version with IFCN signatories on November 26.

Jan 30, 2020

The Duke Reporters’ Lab, IFCN and Google hosted a Fact-Checkers Community Meeting at the offices of The Washington Post. Forty-six people, representing 21 fact-checking outlets and 15 countries, attended. We presented slides about MediaReview, asked fact-checkers to test the creation process on their own, and again asked for feedback from those in attendance.

Apr 16, 2020

The Reporters’ Lab began a testing process with three of the most prominent fact-checkers in the United States: FactCheck.org, PolitiFact, and The Washington Post. We have publicly shared their test MediaReview entries, now totaling 421, throughout the testing process.

Jun 1, 2020

We wrote and circulated a document summarizing the remaining development issues with MediaReview, including new issues we had discovered through our first phase of testing. We also proposed new Media Types for “image macro” and “audio,” and new associated ratings, and circulated those in a document as well. We published links to both of these documents on the Reporters’ Lab site (We want your feedback on the MediaReview tagging system) and published a short explainer detailing the basics of MediaReview (What is MediaReview?)

Jun 23, 2020

We again presented on MediaReview at Global Fact 7 in June 2020, detailing our efforts so far and again asking for feedback on our newly proposed media types and ratings and on our Feedback and Discussion document. The YouTube video of that session has been viewed more than 500 times by fact-checkers around the globe, and dozens participated in the live chat.

Apr 1, 2021

We hosted another session on MediaReview for IFCN signatories on April 1, 2021, again seeking feedback and updating fact-checkers on our plans to further test the Schema proposal.

Jun 3, 2021

In June 2021, the Reporters’ Lab worked with Google to add MediaReview fields to the Fact Check Markup Tool and expand testing to a global user base. We regularly monitored incoming MediaReview entries and stayed in regular contact with the fact-checkers who were testing the new schema.

Nov 10, 2021

We held an open feedback session with fact-checkers on November 10, 2021, providing the community another chance to refine the schema. Overall, fact-checkers have told us that they’re pleased with the process of creating MediaReview and that its similarity to ClaimReview makes it easy to use. As of February 1, 2022, fact-checkers have made a total of 1,156 MediaReview entries.

For more information about MediaReview, contact Joel Luther.

From Sofia to Springfield, fact-checking extends its reach

Reporters' Lab prepares for its annual fact-checking survey in advance of the Global Fact summit in Oslo

By Erica Ryan - April 19, 2022

Fact-checkers in the Gambia and Bulgaria are among the new additions to the Duke Reporters’ Lab database of fact-checking sites around the world. The total now stands at 356, with more updates to come.

FactCheck Gambia and Factcheck.bg both got their start in 2021. Fact-checkers at the Gambian site checked claims in the run-up to the country’s presidential elections in December, and they have continued to examine President Adama Barrow’s inaugural speech as well as other statements from officials and social media claims.

In Bulgaria, the initiative begun by the nonprofit Association of European Journalists-Bulgaria has checked claims ranging from a purported ban on microwaves to concerns about coronavirus vaccines, and it has received increased interest for its work during the war in Ukraine.

Other additions to the database include a couple of TV news features in the United States: the News10NBC Fact Check from WHEC-TV in Rochester, New York, and the KY3 Fact Finders at KYTV-TV in Springfield, Missouri. Both projects focus on rumors and questions from local television viewers.

This is crunch time for the Reporters’ Lab. We’re busily updating our database for the Lab’s annual fact-checking census. We plan to publish this yearly overview in June, shortly before the International Fact-Checking Network convenes its annual Global Fact summit in Oslo.

About the census: Here’s how we decide which fact-checkers to include in the Reporters’ Lab database. The Lab continually collects new information about the fact-checkers it identifies, such as when they launched and how long they last. If you have questions, updates or additions, please contact Lab co-director Mark Stencel (mark.stencel@duke.edu) and project manager Erica Ryan (elryan@gmail.com).

Reporters’ Lab Takes Part in Eighth ‘Global Fact’ Summit

The Reporters’ Lab team participated in five conference sessions and hosted a daily virtual networking table at the conference with more than 1,000 attendees.

By - November 8, 2021

The Duke Reporters’ Lab spent this year’s eighth Global Fact conference helping the world’s fact-checkers learn more about tagging systems that can extend the reach of their work; encouraging a sense of community among organizations around the globe; and discussing new research that offers potent insights into how fact-checkers do their jobs.

This year’s Global Fact took place virtually for the second time, following years of meeting in person all around the world, in cities such as London, Buenos Aires, Madrid, Rome, and Cape Town. More than 1,000 fact-checkers, academic researchers, industry experts, and representatives from technology companies attended the virtual conference.

Over three days, the Reporters’ Lab team participated in five conference sessions and hosted a daily virtual networking table.

- Reporters’ Lab director and IFCN co-founder Bill Adair delivered opening remarks for the conference, focused on how fact-checkers around the world have closely collaborated in recent years.

- Mark Stencel, co-director of the Reporters’ Lab, moderated the featured talk with Tom Rosenstiel, the Eleanor Merrill Visiting Professor on the Future of Journalism at the Philip Merrill College of Journalism at the University of Maryland and coauthor of The Elements of Journalism. Rosenstiel previously served as executive director of the American Press Institute. He discussed research into how the public responds to the core values of journalism and how fact-checkers might be able to build more trust with their audience.

- Thomas Van Damme presented findings from his master’s thesis, “Global Trends in Fact-Checking: A Data-Driven Analysis of ClaimReview,” during a panel discussion moderated by Lucas Graves and featuring Joel Luther of the Reporters’ Lab and Karen Rebelo, a fact-checker from BOOM in India. Van Damme’s analysis reveals fascinating trends from five years of ClaimReview data and demonstrates ClaimReview’s usefulness for academic research.

- Luther also prepared two pre-recorded webinars that were available throughout the conference:

  - Introduction to ClaimReview: the Hidden Infrastructure of Fact-Checks. This session was designed for newcomers to ClaimReview but includes some helpful tips and information for everyone using ClaimReview for their fact-checks.

  - MediaReview: A Sibling to ClaimReview to Tag Manipulated Media. This video offers an overview of how to tag images and videos, as well as some findings from the MediaReview testing we’ve done so far.

In addition, the Reporters’ Lab is excited to reconnect with fact-checkers at 8 a.m. Eastern on Wednesday, November 10, for a feedback session on MediaReview. We’re pleased to report that fact-checkers have now used MediaReview to tag their fact-checks of images and videos 841 times, and we’re eager to hear any additional feedback and continue the open development process we began in 2019 in close collaboration with the IFCN.

Keeping a sense of community in the IFCN

For Global Fact 8, a reminder of the IFCN's important role bringing fact-checkers together.

By Bill Adair - October 20, 2021

My opening remarks for Global Fact 8 on Oct. 20, 2021, delivered for the second consecutive year from Oslo, Norway.

Thanks, Baybars!

Welcome to Norway!

(Pants on Fire!)

It’s great to be here once again among your fiords and gnomes and your great Norwegian meatballs!

(Pants on Fire!)

What….I’m not in Norway?

Well, it turns out I’m still in Durham…again!

And once again we are joined together through the magic of video and more importantly by our strong sense of community. That’s the theme of my remarks today.

Seven years ago, a bunch of us crammed into a classroom in London. I had organized the conference with Poynter because I had heard from several of you that there was a desire for us to come together. It was a magical experience that we all had at the London School of Economics. We were able to discuss our common experiences and challenges.

As I noted in a past speech, one of our early supporters, Tom Glaisyer of the Democracy Fund, gave us some critical advice when I was planning the meeting with the folks at Poynter. Tom said, “Build a community, not an association.” His point was that we should be open and welcoming and that we shouldn’t erect barriers around who could take part in our group. That’s been an important principle in the IFCN and one that’s been possible with Poynter as our home.

You can see the community every week in our email listserv. Have you looked at some of those threads? Lately they’ve helped fact-checkers find the status of COVID passports in countries around the world and learn which countries allowed indoor dining and which were still in lockdown. All of that is possible because of the wonderful way we help each other.

Global Fact keeps getting bigger and bigger. It was so big in Cape Town that we needed a drone to take our group photo. At this rate, for our next get-together, we’ll need to take the group photo from a satellite.

Tom’s advice has served us really well. By establishing the IFCN as a program within the Poynter Institute, a globally renowned journalism organization, we have not only built a community but also avoided the bureaucracy and frustration of creating a whole new organization.

We stood up the IFCN quickly, and it became a wonderfully global organization, with a staff and advisory board that represents a mix of fact-checkers from every continent — except for Antarctica (at least not yet!).

Our community succeeded in creating a common Code of Principles that may well be the only ethical framework in journalism that includes a real verification and enforcement mechanism.

The Poynter-based IFCN, with its many connections in journalism and tech, has raised millions of dollars for fact-checkers all over the world.

And we have done all this without bloated overhead, new legal entities and insular meetings that would distract us from our real work — finding facts and dispelling bullshit. For most fact-checkers, running our own organizations or struggling for resources within our newsrooms is already time-consuming enough.

As we look to the future, some fact-checkers from around the world have offered ideas about how the IFCN can improve. I like many of their suggestions.

Let’s start with the money the IFCN distributes. The fundraising I mentioned is amazing: more than $3 million since March 2020. It’s pretty cool how that gets distributed. All of that money came from major tech companies in the United States, and 84% of it goes to fact-checkers OUTSIDE the US.

But we can be even more transparent about all of that, just as IFCN’s principles demand transparency of its signatories. We can also continue to expand the advisory board to be even more representative of our growing community.

Some other improvements:

We should demand more data and transparency from our funders in the tech community. Fact-checkers also can advocate to make sure that our large tech partners treat members of our community fairly. And we can work together more closely to find new sources of revenue to pay for our work, whether that’s through IFCN or other collaborations.

One possible way is to arrange a system so fact-checkers can get paid for publishing fact-checks with ClaimReview, the tagging system that our Reporters’ Lab developed with Google and Jigsaw. (A bit of our own transparency – they supported our work on that and a similar product for images and video called MediaReview.) Our goal at Duke is to help fact-checkers expand their audiences and create new ways for you to get paid for your important work.

Our community also needs more diverse funding sources, to avoid relying too heavily on any one company or sector. But we also need to be realistic and recognize the financial and legal limitations of the funders, and of our fact-checkers, which represent an incredibly wide range of business models. Some of you have good ideas about that. And we should be talking more about all of that.

The IFCN and Global Fact provide essential venues for us to discuss these issues and make progress together – as do the regional fact-checking collaboratives and networks, from Latin America to Central Europe to Africa, and the numerous country-specific collaborations in Japan, Indonesia and elsewhere. What a dazzling movement we have built – together.

If there’s a message in all this, it’s that all of us need to convene and talk more often. The pandemic has made that difficult. This is the second year we have to meet virtually — and like most of you, I too am sick of talking to my laptop, as I am now.

For now, though, let’s be grateful for the community we have. It’s sunny here in Norway today.

[Pants on Fire!]

I’m looking forward to seeing you in person next year!

MediaReview Testing Expands to a Global Userbase

The Duke Reporters’ Lab is launching the next phase of development of MediaReview, a tagging system that fact-checkers can use to identify whether a video or image has been manipulated.

By - June 3, 2021

Conceived in late 2019, MediaReview is a sibling to ClaimReview, which allows fact-checkers to clearly label their articles for search engines and social media platforms. The Reporters’ Lab has led an open development process, consulting with tech platforms like Google, YouTube and Facebook, and with fact-checkers around the world.

Testing of MediaReview began in April 2020 with the Lab’s FactStream partners: PolitiFact, FactCheck.org and The Washington Post. Since then, fact-checkers from those three outlets have logged more than 300 examples of MediaReview for their fact-checks of images and videos.

We’re ready to expand testing to a global audience and we’re pleased to announce that fact-checkers can now add MediaReview to their fact-checks through Google’s Fact Check Markup Tool, a tool which many of the world’s fact-checkers currently use to create ClaimReview. This will bring MediaReview testing to more fact-checkers around the world, the next step in the open process that will lead to a more refined final product.

ClaimReview was developed through a partnership of the Reporters’ Lab, Google, Jigsaw, and Schema.org. It provides a standard way for publishers of fact-checks to identify the claim being checked, the person or entity that made the claim, and the conclusion of the article. This standardization enables search engines and other platforms to highlight fact-checks, and can power automated products such as the FactStream and Squash apps being developed in the Reporters’ Lab.
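To make the three pieces of information concrete (the claim, who made it, and the article's conclusion), here is a minimal sketch in Python of the kind of ClaimReview record a fact-checker might publish as JSON-LD. It assumes the property names from schema.org's ClaimReview type (`claimReviewed`, `itemReviewed`, `reviewRating`); the helper `build_claim_review` and every example value (URL, claim, claimant, publisher) are hypothetical. It is an illustration, not a complete or validated record; real markup should be checked against the schema.org definitions or generated with a tool such as Google's Fact Check Markup Tool.

```python
import json


def build_claim_review(url, claim_text, claimant, rating_label, publisher, date_published):
    """Return a minimal ClaimReview object as a JSON-LD-ready dict.

    Hypothetical helper: property names follow schema.org's ClaimReview type,
    but this sketch omits many optional fields and is not validated.
    """
    return {
        "@context": "https://schema.org",
        "@type": "ClaimReview",
        # The fact-check article itself and who published it
        "url": url,
        "datePublished": date_published,
        "author": {"@type": "Organization", "name": publisher},
        # The claim being checked
        "claimReviewed": claim_text,
        # The person or entity that made the claim
        "itemReviewed": {
            "@type": "Claim",
            "author": {"@type": "Person", "name": claimant},
        },
        # The article's conclusion, expressed as a textual rating
        "reviewRating": {"@type": "Rating", "alternateName": rating_label},
    }


# Hypothetical example values, for illustration only
example = build_claim_review(
    url="https://example.org/fact-checks/city-budget",
    claim_text="The city's budget doubled last year.",
    claimant="Example Politician",
    rating_label="Pants on Fire",
    publisher="Example Fact-Checker",
    date_published="2021-06-03",
)

# Serialize for embedding in a <script type="application/ld+json"> tag
print(json.dumps(example, indent=2))
```

In this sketch the verdict is carried as human-readable text in `alternateName` rather than forced onto a numeric scale, since fact-checkers around the world use many different rating systems.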

Likewise, MediaReview aims to standardize the way fact-checkers talk about manipulated media. The goal is twofold: to allow fact-checkers to provide information to the tech platforms that a piece of media has been manipulated, and to establish a common vocabulary to describe types of media manipulation. By communicating clearly in consistent ways, independent fact-checkers can play an important role in informing people around the world.

The Duke Reporters’ Lab has led the open process to develop MediaReview, and we are eager to help fact-checkers get started with testing it. Contact Joel Luther for questions or to set up a training session. International Fact-Checking Network signatories who have questions about the process can contact the IFCN.

For more information, see the new MediaReview section of our ClaimReview Project website.

Fact-checking census shows slower growth

The number of new projects dipped, even as fact-checking reached more countries than ever

By Mark Stencel and - June 2, 2021

Fact-checkers are now found in at least 102 countries – more than half the nations in the world.

The latest census by the Duke Reporters’ Lab identified 341 active fact-checking projects, up 51 from last June’s report.

But after years of steady and sometimes rapid growth, there are signs that the trend is slowing, even though misleading content and political lies have played a growing role in contentious elections and the global response to the coronavirus pandemic.

Our tally revealed a slowdown in the number of new fact-checkers, especially when we looked at the upward trajectory of projects since the Lab began its yearly survey and global fact-checking map seven years ago.

The number of fact-checking projects that launched since the most recent Reporters’ Lab census was less than a third of the number that started in the 12 months before that, based on our adjusted tally.

From July 2019 to June 2020, there were 61 new fact-checkers. In the year since then, there were 19.

Meanwhile, 21 fact-checkers shut down in that same two-year period beginning in June 2019. And 54 additions to the Duke database in that same period were fact-checkers that were already up and running prior to the 2019 census.

Looking at the count by calendar year also underscored the slowdown in the time of COVID.

The Reporters’ Lab counted 36 fact-checking projects that launched in 2020. That was below the annual average of 53 for the preceding six calendar years – and less than half the number of startups that began fact-checking in 2019. The 2020 launches were also the lowest number of new fact-checkers we’ve counted since 2014.

New Fact Checkers by Year

(Note: The adjusted number of 2020 launches may increase slightly over time as the Reporters’ Lab identifies other fact-checkers we have not yet discovered.)

The slowdown comes after a period of rapid expansion that began in 2016. That was the year when the Brexit vote in the United Kingdom and the presidential race in the United States raised public alarm about the impact of misinformation.

In response, major tech companies such as Facebook and Google elevated fact-checks on their platforms and provided grants, direct funding and other incentives for new and existing fact-checking organizations. (Disclosure: Google and Facebook fund some of the Duke lab’s research on technologies for fact-checkers.)

The 2018-2020 numbers presented below are adjusted from earlier census reports to include fact-checkers that were subsequently added to our database.

Active Fact-Checkers by Year

Note: 2021 YTD includes one fact-checker that closed in 2021.

Growth has been steady on almost every continent except in North America. In the United States, where fact-checking first took off in the early 2010s, there are 61 active fact-checkers now. That’s down slightly from the 2020 election year, when there were 66. But the U.S. is still home to more fact-checking projects than any other country. Of the current U.S. fact-checkers, more than half (35 of 61) focus on state and local politics.

Fact-Checkers by Continent

Among other details we found in this year’s census:

- More countries, more staying power: Based on our adjusted count, fact-checkers were active in at least 47 countries in 2014. That more than doubled to 102 now. And most of the fact-checkers that started in 2014 or earlier (71 out of 122) are still active today.

- Fact-checking is more multilingual: The active fact-checkers produce reports in nearly 70 languages, from Albanian to Urdu. English is the most common, used on 146 different sites, followed by Spanish (53), French (33), Arabic (14), Portuguese (12), Korean (11) and German (10). Fact-checkers in multilingual countries often present their work in more than one language – either in translation on the same site, or on different sites tailored for specific language communities, including original reporting for those audiences.

- More than media: More than half of the current fact-checkers (195 of 341) are affiliated with media organizations, including national news publishers and broadcasters, local news sources and digital-only outlets. But there are other models, too. At least 37 are affiliated with non-profit groups, think tanks and nongovernmental organizations, and 26 are affiliated with academic institutions. Some of the fact-checkers involve cross-organization partnerships and have multiple affiliations. But to be listed in our database, the fact-checking must be organized and produced in a journalistic fashion.

- Turnover: In addition to the 341 current fact-checkers, the Reporters’ Lab database and map also include 112 inactive projects. From 2014 to 2020, an average of 15 fact-checking projects closed down each year. Limited funding and expiring grants are among the most common reasons fact-checkers shuttered their sites. But there also are short-run, election year projects and partnerships that intentionally close down once the voting is over. Of all the inactive projects, 38 produced fact-checks for a year or less. The average lifespan of an inactive fact-checker is two years and three months. The active fact-checkers have been in business twice as long – an average of more than four and a half years.

The Reporters’ Lab process for selecting fact-checkers for its database is similar to the standards used by the International Fact-Checking Network – a project based at the Poynter Institute in St. Petersburg, Florida. IFCN currently involves 109 organizations that each agree to a code of principles. The Lab’s database includes all the IFCN signatories, but it also counts any related outlets – such as the state-level news partners of PolitiFact in the United States, the wide network of multilingual fact-checking sites that France’s AFP has built across its global bureau system, and the fact-checking teams Africa Check and PesaCheck have mobilized in countries across Africa.

Reporters’ Lab project manager Erica Ryan and student researchers Amelia Goldstein and Leah Boyd contributed to this year’s report.

About the census: Here’s how we decide which fact-checkers to include in the Reporters’ Lab database. The Lab continually collects new information about the fact-checkers it identifies, such as when they launched and how long they last. That’s why the updated numbers for earlier years in this report are higher than the counts the Lab included in earlier reports. If you have questions, updates or additions, please contact Mark Stencel or Joel Luther.

Related Links: Previous fact-checking census reports

Fact-checking count tops 300 for the first time

The Reporters' Lab finds fact-checkers at work in 84 countries – but growth in the U.S. has slowed

By Mark Stencel and - October 13, 2020

The number of active fact-checkers around the world has topped 300 — about 100 more than the Duke Reporters’ Lab counted this time a year ago.

Some of that growth is due to the 2020 election in the United States, where the Lab’s global database and map now include 58 fact-checking projects. That’s more than twice as many as any other country, and nearly a fifth of the current worldwide total: 304 in 84 countries.

But the U.S. is not driving the worldwide increase.

The last U.S. presidential election sounded an alert about the effects of misinformation, especially on social media. But those concerns weren’t just “made in America.” From the 2016 Brexit vote in the U.K. to this year’s coronavirus pandemic, events around the globe have led to new fact-checking projects that call out rumors, debunk hoaxes and help the public identify falsehoods.

The current fact-checking tally is up 14 from the 290 the Lab reported in its annual fact-checking census in June.

Over the past four years, growth in the U.S. has been sluggish — at least compared with other parts of the world, where Facebook, WhatsApp and Google have provided grants and incentives to enlist fact-checkers’ help in thwarting misinformation on their platforms. (Disclosure: Facebook and Google also provided support for research at the Reporters’ Lab.)

By looking back at the dates when each fact-checker began publishing, we now see there were about 145 projects in 59 countries that were active at some point in 2016. Of that 145, about a third were based in the United States.

The global total more than doubled from 2016 to now. And the number outside the U.S. increased two and a half times — from 97 to 246.

During that same four years, there were relatively big increases elsewhere. Several countries in Asia saw big growth spurts — including Indonesia (which went from 3 fact-checkers to 9), South Korea (3 to 11) and India (3 to 21).

In comparison, the U.S. count in that period is up from 48 to 58.

The comparison is also striking when counting the fact-checkers by continent. The number in South America doubled while the counts for Africa and Asia more than tripled. The North American count was up too — by a third. But the non-U.S. increase in North America was more in line with the pace elsewhere, nearly tripling from 5 to 14.

These global tallies leave out 19 other fact-checkers that launched since 2016 that are no longer active. Among those 19 were short-lived, election-focused initiatives, sometimes involving multiple news partners, in France, Norway, Mexico, Sweden, Nigeria, Philippines, Argentina and the European Union.

Several factors seem to account for the slower growth in the U.S. For instance, many of the country’s big news media outlets have already done fact-checking for years, especially during national elections. So there is less room for fact-checking to grow at that level.

USA Today was one of the few major media newcomers to the national fact-checking scene in the U.S. since 2016. The others were more niche, including The Daily Caller’s Check Your Fact, the Poynter Institute’s MediaWise Teen Fact-Checking Network and The Dispatch. In addition, the French news service AFP started a U.S.-based effort as part of its push to establish fact-checking teams in many of its dozens of international bureaus. The National Academies of Sciences, Engineering and Medicine also launched a fact-checking service called “Based on Science” — one of a number of science- and health-focused fact-checking projects around the world.

Of the 58 U.S. fact-checkers, 36 are focused on state and local politics, especially during regional elections. While some of these local media outlets have been at it for years, including some of PolitiFact’s longstanding state-level news partners, others work on their own, such as WISC-TV in Madison, Wisconsin, which began its News 3 Reality Check segments in 2004. There also are one-off election projects that come to an end as soon as the voting is over.

A wildcard in our Lab’s current U.S. count is the effort to increase local fact-checking across large national news chains. One such newcomer since the 2016 election is Tegna, a locally focused TV company with more than 50 stations across the country. It encourages its stations’ news teams to produce fact-checking reports as part of the company’s “Verify” initiative — though some stations do more regular fact-checking than others. Tegna also has a national fact-checking team that produces segments for use by its local stations. A few other media chains are mounting similar efforts, including some of the local stations owned by Nexstar Inc. and more than 260 newspapers and websites operated by USA Today’s owner, Gannett. Those are promising signs.

There’s still plenty of room for more local fact-checking in the U.S. At least 20 states have one or more regionally focused fact-checking projects already. The Reporters’ Lab is keeping a watchful eye out for new ventures in the other 30.

Note about our methodology: Here’s how we decide which fact-checkers to include in the Reporters’ Lab database. The Lab continually collects new information about the fact-checkers it identifies, such as when they launched and how long they last. That’s why the updated numbers for 2016 used in this article are higher than the counts the Lab reported in its annual fact-checking census from February 2017. If you have questions or updates, please contact Mark Stencel or Joel Luther.

Related Links: Previous fact-checking census reports

‘It’s great to be here in Norway!’

For the kickoff of Global Fact 7, a celebration of the IFCN's community.

By Bill Adair - June 22, 2020

My opening remarks for Global Fact 7, delivered from Oslo, Norway*, on June 22, 2020.

I’m going to do something a little different this year. I’m going to fact-check my own opening remarks using this antique PolitiFact Pants on Fire button. You know Pants on Fire – it’s our rating for the most ridiculous falsehoods.

First, I want to say that it’s great to be here in Norway! (Pants on Fire!)

I love Norway because I’ve always loved the great plastic furniture I buy from your famous furniture store IKEA! (Pants on Fire!)

I am accompanied today by this Norwegian gnome, which I know is very much a symbol of your great country! (Pants on Fire!)

Okay….I’m actually here in Durham, North Carolina, but thanks to the magic of pixels and…Baybars!…I’m with you!

First, some news…

As you probably know, every year before Global Fact, the Duke Reporters’ Lab conducts a census of fact-checking to count the world’s fact-checkers and we are out today with the number. This is the product of painstaking work by Mark Stencel, Joel Luther, Mimi Goldstein and Matthew Griffin. Our count this year is 290 fact-checking projects in 83 countries. That’s up from 188 in 60 countries a year ago.

I heard that and my first thought was that …. fact-checking keeps growing!

I should note that Mark Stencel has been working hard on this, staying up all night for the last few days to get it done. So I should reveal my secret: we pay him in coffee!

This morning, I’m going to talk briefly about community.

Back in 2014, when we started planning the first meeting of the world’s fact-checkers – back when we could all squeeze into a classroom at the London School of Economics – Tom Glaisyer of the Democracy Fund gave me some important advice. Build a community, Tom said, not an association. The way to help the fact-checking movement was to be inviting and encourage journalists to start fact-checking. We’ve done that because this meeting, and our group, keeps getting larger. You could even say that … fact-checking keeps growing!

And as a bonus, we also managed to establish the Code of Principles, which provides an important incentive for transparency and fairness in your fact-checking.

My favorite example of the IFCN’s spirit of community is the simplest: our email threads. They are often amazing! A fact-checker will write with a problem they are having and community members from all over the world will respond with suggestions and even help them do the work.

Did you see the amazing one a couple of months ago? Samba of Africa Check wrote about a video that claimed to show violence against Africans in China. He knew it was fake but was not sure where it was from, so he circulated the video by email. That led to a remarkable exchange.

A coordinator from Witness in the United States said the video had been posted on Reddit, suggesting it was from New York. Jacques Pezet of Liberation in France took the image and used Google Maps to find the New York intersection where it was filmed. And then Gordon Farrer, an Australian researcher, used Google Street View to identify the business – a dental office called Brace Yourself.

All of this showed up in Samba’s fact-check for Africa Check in Senegal.

Amazing! All the product of our community!

Another great example: the tremendous work by the IFCN bringing together the world’s fact-checkers to debunk falsehoods about COVID-19. The CoronaVirus Alliance has now collected more than 6,000 fact-checks. It, too, is a product of community, organized by Baybars and Cris Tardaguila.

And one more: MediaReview. You’re going to hear about it tomorrow. It grew out of some great work by the Washington Post and we’ve been working on it with Baybars and PolitiFact and FactCheck.org and fact-checkers from around the world that attended a meeting in January. It’s a new tagging system like ClaimReview that you’ll be able to use for videos and images. I’m more excited about MediaReview than anything I’ve done since PolitiFact because it could really have an impact in the battle against misinformation.

Finally, I want to give some shoutouts to two marvelous people who embody this commitment to community. Peter Cunliffe-Jones has been an amazing builder who has done extraordinary things to bring fact-checking to Africa. And Laura Zommer has been tireless helping dozens of fact-checkers get started in Latin America.

Together, they show what’s wonderful about the IFCN: they believe in our important journalism and they have given their time and energy to help it grow.

Our community grows thanks to these wonderful leaders. I look forward to sharing a glass of wine with them — and you — next year in Oslo!

Bundle up!

*Actually, these remarks were delivered from my backyard in Durham, North Carolina.

Annual census finds nearly 300 fact-checking projects around the world

Growth is fueled by politics, protests and pandemic

By Mark Stencel and - June 22, 2020

With elections, unrest and a global pandemic generating a seemingly endless supply of falsehoods, the Duke Reporters’ Lab finds at least 290 fact-checking projects are busy debunking those threats in 83 countries.

That count is up from 188 active projects in more than 60 countries a year ago, when the Reporters’ Lab issued the annual census at the Global Fact Summit in South Africa. There has been so much growth that the number of active fact-checkers added in the past year alone is more than double the total number when the Lab began keeping track in 2014.

There has been plenty of news to keep those fact-checkers busy, including widespread protests in countries such as Chile and the United States. Events like these attract a broad range of new fact-checkers — some from well-established media companies, as well as industrious startups, good-government groups and journalism schools.

Our global database and map show considerable growth in Asia, particularly India, where the Lab currently counts at least 20 fact-checkers. We also saw a spike in Chile that started with the nationwide unrest there last fall.

Fact-Checkers by Continent Since June 2019

Africa: 9 to 19

Asia: 35 to 75

Australia: 5 to 4

Europe: 61 to 85

North America: 60 to 69

South America: 18 to 38

But the coronavirus pandemic has been the story that has topped almost every fact-checking page in every country since February.

At least five fact-checkers on the Lab’s map already focused on public health and medical claims. One of the newest is The Healthy Indian Project, which launched last year. But the pandemic has turned almost every fact-checking operation into a team of health reporters. And the International Fact-Checking Network also has coordinated coverage through its #CoronaVirusFacts Alliance.

The pandemic has also turned IFCN’s 2020 Global Fact meeting into a virtual conference this week, instead of the in-person gathering originally planned in Oslo, Norway. And one of the themes participants will be talking about is the institutional factors that have generated more interest and attention for fact-checkers.

To combat increasing online misinformation, major digital platforms in the United States, including Facebook, WhatsApp, Google and YouTube, have provided incentives to fact-checkers, including direct contributions, competitive grants and help with technological infrastructure to increase the distribution of their work. (Disclosure: Facebook and Google separately help fund research and development projects at the Reporters’ Lab, and the Lab’s co-directors have served as judges for some grants.)

Many of these funding opportunities were specifically for signatories of the IFCN’s Code of Principles, a charter that requires independent assessors to regularly review the editorial and ethical practices of each fact-checker that wants its status verified.

A growing number of fact-checkers are also part of national and regional partnerships. These short-term collaborations can evolve into longer-term partnerships, as we’ve seen with Brazil’s Comprova, Colombia’s RedCheq and Bolivia Verifica. They also can inspire participating organizations to continue fact-checking on their own.

Over time, the Reporters’ Lab has tried to monitor these contributors and note when they have developed into fact-checkers that should be listed in their own right. That’s why our database shows considerable growth in South Korea — home to the long-standing SNU FactCheck partnership based at Seoul National University’s Institute of Communications Research.

As has been the case with each year’s census, some of the growth also comes from established fact-checkers that came to the attention of the Reporters’ Lab after last June’s census was published — offset by at least nine projects that closed down in the months that followed.

But the overall trend was still strong. In all, 68 of the projects in the database launched since the start of 2019. And more than half of them (40 of the 68) opened for business after the 2019 census, including 11 so far in the first half of 2020. And most of them appear to have staying power. Of those 68, only four are no longer operating. And three of those were election-related collaborations that launched as intentionally short-term projects.

We also have tried to be more thorough about distinguishing among specific projects and outlets that are produced or distributed by different teams within the same or related organizations. The variety of strong fact-checking programs and web pages produced by variously affiliated French public broadcasters is a good example. (Here’s how we decide which fact-checkers to include in the Reporters’ Lab database.)

The increasing tally of fact-checkers, which continues a trend that started in 2014, is remarkable. While this is a challenging time for journalism in just about every country, public alarm about the effects of misinformation is driving demand for credible reporting and research — the work a growing list of fact-checkers is busy doing around the world.

The Reporters’ Lab is grateful for the contributions of student researchers Amelia Goldstein and Matthew Griffin; journalist and media/fact-checking trainer Shady Gebril; fact-checkers Enrique Núñez-Mussa of FactCheckingCL and EunRyung Chong of SNU FactCheck; and the staff of the International Fact-Checking Network. The Reporters’ Lab updates its fact-checking database throughout the year. If you have updates or information, please contact Mark Stencel and Joel Luther.

Related Links: Previous fact-checking census reports